In January 2026, D-Wave Quantum Inc. announced something that quantum computing experts had been calling impossible for years: they had figured out how to control thousands of qubits without drowning the system in a spaghetti mess of wiring. The same week, researchers published a new error correction method that barely slows down as you add more qubits. A month earlier, Google demonstrated that quantum systems can actually become more stable as they scale, not more fragile. Each of these achievements had been written off as theoretical at best and a pipe dream at worst. And yet here we are.

The pattern raises a question worth taking seriously: why does a field keep outrunning its own expert consensus? The answer reveals something fundamental about both the technology and the nature of scientific progress itself.

The Wiring Problem That Couldn't Be Solved

The challenge D-Wave tackled sounds almost mundane: wiring. Every qubit in a quantum computer needs to be individually controlled, and that control requires physical connections. As you add more qubits, you add more wires. At some point, the physics simply stops cooperating. The wires generate heat. They take up space. They interfere with each other. Building a quantum computer with millions of qubits using conventional approaches would require a cooling system the size of a building and more control wires than could physically fit.

For years, the consensus held that this was an engineering problem without an engineering solution. You could make qubits better. You could make them more stable. But one wire per qubit appeared to be a hard physical constraint, and that meant quantum computers would never scale to truly useful sizes.

D-Wave's breakthrough, led by chief technology officer Mark Johnson, came from refusing to accept this constraint. Using multiplexed digital-to-analog converters, their team demonstrated control of tens of thousands of qubits with just 200 bias wires. They achieved this by integrating a high-coherence fluxonium qubit chip with a multilayer control chip using superconducting bump bonding. Key components were fabricated at NASA's Jet Propulsion Laboratory.

The technical details matter less than the conceptual shift. D-Wave didn't solve the wiring problem by making better wires. They solved it by redefining what "controlling a qubit" means at the hardware level, sidestepping the constraint rather than pushing through it.
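To see why multiplexing changes the arithmetic, here is a minimal sketch. It assumes a hypothetical crosspoint addressing scheme in which each control element sits at the intersection of one wire from each of several groups. The function names and the wire splits are illustrative assumptions, not D-Wave's published design.

```python
from math import prod

def wires_needed(wires_per_axis):
    """Total physical wires: every axis contributes its own group of lines."""
    return sum(wires_per_axis)

def addressable_devices(wires_per_axis):
    """Devices reachable when each device is selected by one wire per axis.

    Hypothetical crosspoint scheme: a device responds only when all of
    its lines (one from each axis) are driven at the same time.
    """
    return prod(wires_per_axis)

# Three ways to spend the same budget of 200 wires (illustrative splits).
layouts = {
    "one wire per device": (200,),
    "rows x columns": (100, 100),
    "three-axis addressing": (67, 67, 66),
}

for name, axes in layouts.items():
    print(f"{name}: {wires_needed(axes)} wires -> "
          f"{addressable_devices(axes)} devices")
```

The wire count grows as a sum while the number of reachable devices grows as a product, which is how a few hundred lines can plausibly reach tens of thousands of control elements. The trade-off in a scheme like this is that devices are programmed a few at a time rather than all at once, so each one has to hold its setting between updates.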

When Errors Fix Themselves

Quantum computers make mistakes. This isn't a bug in the design; it's a fundamental feature of working with quantum states. Qubits can exist in superposition, a weighted combination of 0 and 1 that resolves to a single value only when measured. But that delicate quantum state can collapse, or "decohere," when it interacts with its environment. Heat, electromagnetic radiation, even vibrations can introduce errors.

The standard approach to this problem borrows from classical computing: error correction codes. You spread information across multiple physical qubits to create logical qubits that can detect and fix errors. The catch is that error correction itself requires computation, and that computation takes time. As you scale up the number of qubits, the error correction overhead was supposed to scale up too, potentially faster than the useful computation you were trying to protect.
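The idea of spreading one unit of information across several physical carriers can be sketched with the simplest classical example, a repetition code decoded by majority vote. This is an analogy only: real quantum codes cannot copy a qubit or read the data directly, and instead measure parity checks. The function names and the 5% error rate below are assumptions chosen for illustration.

```python
from math import comb

def encode(bit, n=3):
    """Store one logical bit as n identical physical bits."""
    return [bit] * n

def decode(codeword):
    """Majority vote: recover the logical bit if fewer than half flipped."""
    return int(sum(codeword) > len(codeword) / 2)

def exact_logical_error(p, n):
    """Probability that more than half of n bits flip, each independently
    with probability p. This is when majority voting gives the wrong answer."""
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p)**(n - k)
               for k in range(k_min, n + 1))

# A single flipped bit is outvoted by the other two.
corrupted = encode(1)
corrupted[0] ^= 1
print(decode(corrupted))

# Logical error rates for an assumed 5% physical error rate.
for n in (1, 3, 5, 7):
    print(n, exact_logical_error(0.05, n))
```

With independent errors at 5%, three bits already push the logical error rate below 1%, and each larger code pushes it lower still. Larger codes also give the decoder more to process on every round, which is the overhead at issue here.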

Researchers at multiple institutions have now demonstrated that this scaling curse can be broken. A team published results in early January 2026 showing error correction where "the time needed for error-correction computation barely increases even as the number of components grows." They achieved both "ultimate accuracy" and "ultra-fast computational efficiency" simultaneously, which the field had long assumed required tradeoffs.

IBM contributed to this progress with an efficient decoder for quantum error correction that runs ten times faster than the previous leading approach, a milestone it reached a full year ahead of its roadmap. The system can decode errors in real time, in less than 480 nanoseconds, using what are called quantum low-density parity-check (qLDPC) codes.

The significance here isn't just faster error correction. It's that the overhead problem turned out to be an artifact of how researchers were thinking about decoding, not a constraint imposed by quantum mechanics. The ceiling was in the algorithm, not the physics.
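For readers who want a feel for what "decoding" means, the sketch below shows the core step on a tiny classical parity-check code: compute a syndrome from the parity checks, then infer which bit flipped. It uses the classical [7,4] Hamming code as a stand-in, which is an assumption made for brevity. It is not a quantum LDPC code and not IBM's decoder, both of which are far larger and more involved.

```python
# Parity-check matrix of the classical [7,4] Hamming code.
# Column j (counting from 1) is the binary form of j, lowest bit in row 0.
H = [
    [1, 0, 1, 0, 1, 0, 1],
    [0, 1, 1, 0, 0, 1, 1],
    [0, 0, 0, 1, 1, 1, 1],
]

def syndrome(parity_checks, error):
    """Evaluate every parity check on the error pattern (arithmetic mod 2)."""
    return [sum(h * e for h, e in zip(row, error)) % 2
            for row in parity_checks]

def locate_single_error(s):
    """Read the syndrome as a binary number to find the flipped position.

    Returns a 0-based index, or None when the syndrome is all zeros.
    Valid only under the assumption that at most one bit flipped.
    """
    position = s[0] + 2 * s[1] + 4 * s[2]
    return position - 1 if position else None

error = [0, 0, 0, 0, 1, 0, 0]   # a single flip at index 4
s = syndrome(H, error)
print(s, locate_single_error(s))
```

The decoder never looks at the stored data, only at which checks fired. In a real machine this inference has to finish before the next round of checks arrives, which is why decoding latency in the nanosecond range matters.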

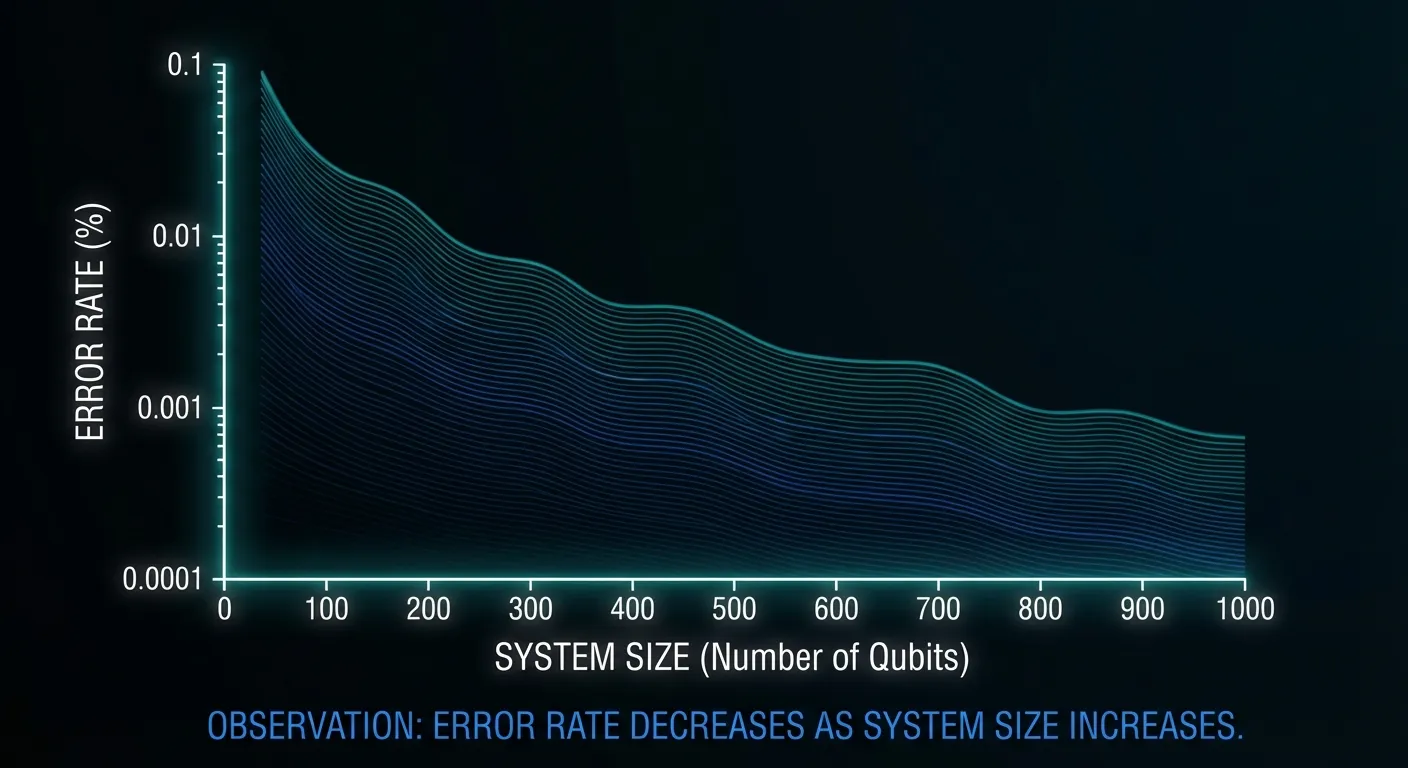

Google's Paradox: Bigger Is Better

Perhaps the most counterintuitive breakthrough came from Google's work on quantum error correction. In classical engineering, larger systems are generally harder to control and more prone to failure. A car with more parts has more potential points of breakdown. A longer supply chain has more potential disruptions. Scale introduces fragility.

Quantum systems were assumed to follow this pattern even more severely. Quantum states are delicate. More qubits should mean more opportunities for decoherence, more chances for errors to cascade, more ways for the system to collapse into uselessness. Building bigger quantum computers should make the stability problem worse, not better.

Google demonstrated the opposite. A team led by Hartmut Neven, head of Google Quantum AI, showed that their quantum system actually becomes more stable as it scales. With each step up in the "distance" of the error-correcting code (from distance 3 to 5 to 7), the logical error rate fell by a factor of 2.14, so the suppression compounds exponentially as the code grows. Separately, AlphaQubit, a machine-learning decoder from Google DeepMind, achieved a 30% reduction in errors compared to leading conventional decoders.
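The compounding effect of that factor can be made concrete with a few lines of arithmetic. The sketch below assumes the suppression factor stays fixed at 2.14 for every step of two in code distance, which is an extrapolation beyond the distances actually reported. The starting error rate of 1% at distance 3 is a hypothetical placeholder, not a measured value.

```python
SUPPRESSION_FACTOR = 2.14  # reported reduction per step of 2 in code distance

def logical_error_rate(rate_at_d3, distance, factor=SUPPRESSION_FACTOR):
    """Extrapolated logical error rate at an odd code distance >= 3.

    Assumes the same suppression factor applies at every step, which
    has only been demonstrated for distances 3, 5 and 7.
    """
    steps = (distance - 3) // 2
    return rate_at_d3 / factor**steps

def distance_for_target(rate_at_d3, target, factor=SUPPRESSION_FACTOR):
    """Smallest odd distance whose extrapolated rate is at or below target."""
    distance = 3
    while logical_error_rate(rate_at_d3, distance, factor) > target:
        distance += 2
    return distance

HYPOTHETICAL_RATE_D3 = 1e-2  # placeholder starting point, not a measurement

for d in (3, 5, 7, 9, 11):
    print(d, logical_error_rate(HYPOTHETICAL_RATE_D3, d))

print(distance_for_target(HYPOTHETICAL_RATE_D3, 1e-6))
```

Because each step divides the error rate by the same factor, reaching very low error rates takes a number of steps that grows only with the logarithm of the target. That is the sense in which a larger code becomes more stable, provided the physical qubits stay below the error threshold.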

This result marks what researchers call the end of the "Noisy Intermediate-Scale Quantum" (NISQ) era. For years, quantum computers existed in a twilight zone: too noisy for reliable computation but too interesting to abandon. The assumption was that we'd need revolutionary new approaches to escape NISQ and reach fault-tolerant quantum computing. Instead, incremental improvements in error correction crossed a threshold that changed the fundamental scaling behavior.

Why Experts Keep Being Wrong

The pattern of quantum computing breakthroughs contradicting expert predictions isn't random. It reflects something systematic about how we reason about new technologies, especially technologies that operate according to different rules than our everyday experience.

Human intuition evolved in a classical world. We understand that things can be in one place or another, not both. We know that observing something doesn't change it. We expect larger systems to be harder to manage. Quantum mechanics violates all of these intuitions. Superposition lets quantum states be multiple things at once. Measurement fundamentally alters quantum systems. And entanglement creates correlations that have no classical analogue.

When experts predict what's possible in quantum computing, they're often reasoning by analogy to classical systems. In classical computing, you really do need individual connections to individual components. In classical error correction, there really are tradeoffs between accuracy and speed. And large classical systems really are harder to control. These analogies feel natural, but quantum mechanics doesn't respect them.

The deeper issue is that expertise can become its own trap. The mental models that make someone effective in one domain become invisible assumptions when applied to another. A classical engineer who internalizes "bigger means more fragile" will see fragility as inevitable in large quantum systems, not because the physics demands it but because the analogy feels self-evident. Each of the recent breakthroughs came from researchers who recognized a classical assumption masquerading as a quantum law and then discarded it.

This doesn't mean all expert skepticism is wrong. Some problems in quantum computing may prove genuinely intractable. But the track record suggests that when an expert says something is impossible in quantum computing, the most productive response is to ask: impossible according to which set of assumptions?

The Road to Quantum Advantage

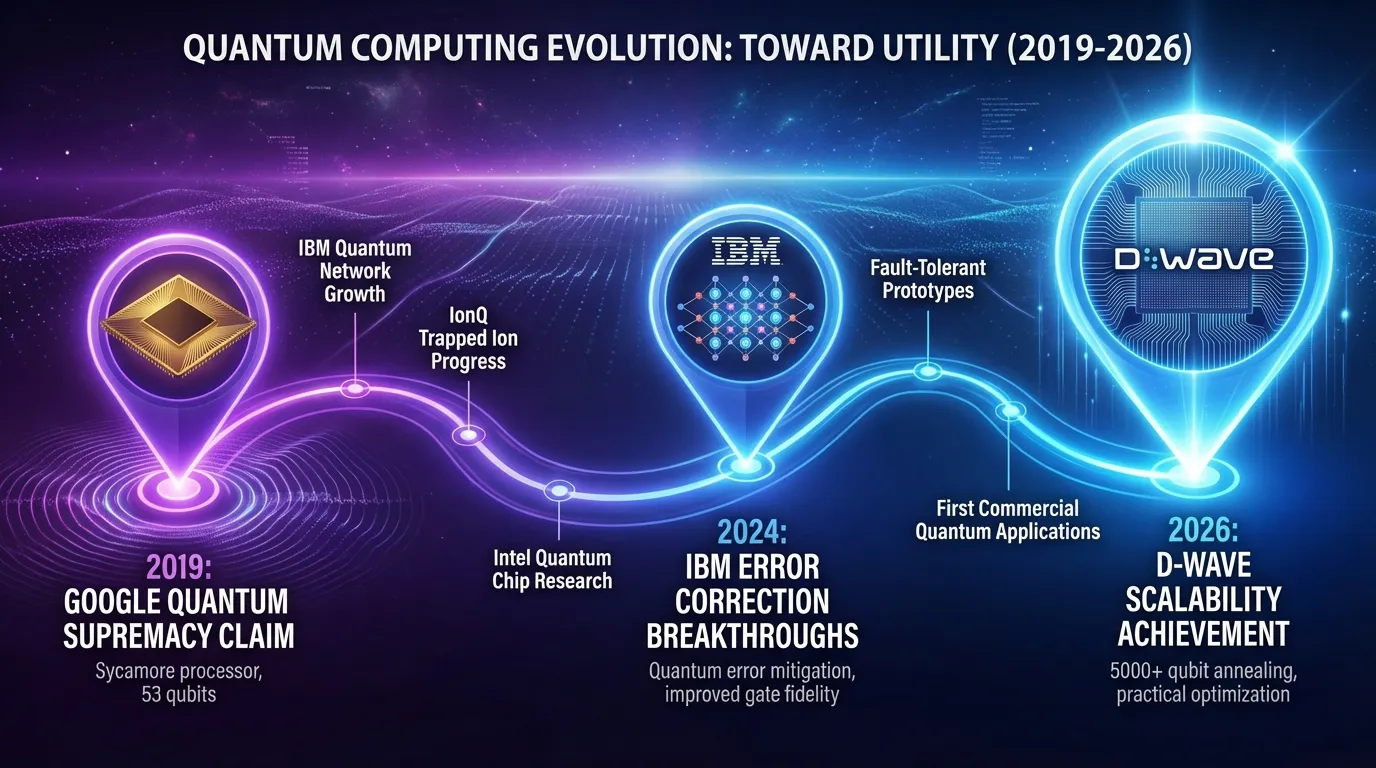

IBM now predicts that the first cases of verified quantum advantage will be confirmed by the wider community by the end of 2026. This is a specific, measurable claim: that quantum computers will demonstrably solve certain problems faster than any classical computer could.

Previous claims of quantum advantage have been controversial. Google's 2019 quantum supremacy demonstration was criticized because the problem it solved was specifically designed to favor quantum computers rather than to be practically useful. D-Wave's 2024 claim about simulating magnetic materials faced similar scrutiny. Skeptics argued that these demonstrations showed quantum computers could do quantum things quickly, but didn't prove they could do useful things faster than classical alternatives.

The 2026 predictions are different because they're backed by concrete technical milestones. IBM expects future iterations of its Nighthawk processor to run circuits of up to 7,500 gates by the end of the year. Microsoft, in collaboration with Atom Computing, plans to deliver an error-corrected quantum computer to Denmark. QuEra has delivered a quantum machine ready for error correction to Japan's AIST and is making it available to global customers.

The shift from research demonstrations to commercial deployments represents a maturation of the field. These aren't experiments showing what might be possible. They're products designed to solve real problems for paying customers.

Cross-Disciplinary Connections

The quantum computing story connects to broader patterns in scientific and technological progress. In 1895, Lord Kelvin declared that "heavier-than-air flying machines are impossible." Eight years later, the Wright brothers proved him wrong. The sound barrier was once considered a physical limit on aircraft speed; it turned out to be a problem of aircraft design. In both cases, the apparent impossibility dissolved once someone reframed the problem rather than pushing harder against the original formulation.

But there is an important difference between quantum computing and these earlier examples. Flight and supersonic travel operate in the same physical regime as everyday human experience. Engineers could build intuition by watching birds, studying airflow, and running wind tunnel tests. Quantum mechanics operates in a regime that no human has ever directly experienced. Superposition, entanglement, and measurement-induced collapse have no analogues in daily life. This means that the gap between expert intuition and physical reality is unusually wide in quantum computing, which may explain why the field produces an unusually high rate of "impossible" results.

The connection to artificial intelligence is particularly striking here. Google's AlphaQubit uses machine learning to decode errors more effectively than traditional algorithmic approaches, and the reason may be precisely that neural networks don't carry classical intuitions. A machine learning system trained on quantum data can discover patterns that a human physicist, burdened by classical analogies, would never think to look for. The intersection of AI systems that learn physics by watching and quantum computing may accelerate progress in both fields, with each domain providing insights that the other's practitioners would find counterintuitive.

The Deeper Question

The quantum computing breakthroughs of early 2026 represent more than technical achievements. They mark a transition from "can quantum computers work?" to "what can quantum computers do?" This is the same transition that personal computers made in the 1980s, that the internet made in the 1990s, and that AI has been making in recent years.

We still don't know exactly what quantum computers will be best at. Optimization problems, drug discovery, materials science, and cryptography are the usual suspects, but the most important applications may be ones we haven't imagined yet. The same was true of classical computers: no one in 1946 predicted social media or smartphone apps. The state of space exploration in 2026 shows similar patterns of rapid advancement defying earlier projections.

The more interesting question may be what quantum computing reveals about how technologies mature. The field spent years in a phase where the obstacles felt definitional, where the challenges weren't bugs to fix but features of reality to accept. The transition out of that phase happened not through one eureka moment but through a cascade of reframings, each of which made the next more plausible. Once D-Wave showed that a hardware assumption could be sidestepped, it became easier to ask whether other "fundamental" limits were also assumption-dependent. The recent plasma physics breakthrough that surpassed the Greenwald limit for fusion energy offers a parallel example of a supposedly fundamental barrier falling to creative engineering.

This cascading pattern suggests that the next few years in quantum computing may be shaped less by any single technical milestone than by a shift in the field's collective imagination. When a community stops treating its obstacles as laws of nature and starts treating them as design choices, progress tends to accelerate in ways that surprise even the optimists. Some problems will remain genuinely hard. But the most productive stance toward quantum computing's future may be the one its most successful researchers have already adopted: deep respect for the physics, combined with deep skepticism toward any claim about what the physics forbids.

The age of practical quantum computing isn't just approaching. By multiple measures, it's already here.

Sources

- D-Wave demonstrates first scalable, on-chip cryogenic control of gate-model qubits - D-Wave press release on multiplexed qubit control breakthrough

- Quantum error correction below the surface code threshold - Nature paper on exponential error suppression in surface codes

- Introducing Relay-BP: real-time quantum LDPC decoding - IBM Quantum blog on real-time error correction decoding with 10x speedup

- IBM lays out clear path to fault-tolerant quantum computing - IBM roadmap for quantum advantage and fault tolerance