Every few decades, a technology standard emerges that seems boring on the surface but transforms everything it touches. USB replaced a chaos of proprietary connectors with a universal interface. HTTP made it possible for any computer to talk to any web server. TCP/IP created the language that allowed the internet to exist. In 2025, a new standard called the Model Context Protocol, or MCP, is positioning itself to play a similar role for artificial intelligence.

The analogy that has stuck compares MCP to USB-C: one universal connector replacing a chaos of incompatible cables. But the comparison undersells what is at stake. USB-C simplified hardware. MCP could determine whether the AI industry develops as an open ecosystem or fragments into walled gardens controlled by a handful of companies. The implications extend well beyond developer convenience, into competition policy, user autonomy, and the distribution of power in a technology that is reshaping every industry it touches.

The Problem MCP Solves

To understand why MCP matters, you need to understand a fundamental limitation of current AI systems. Large language models like GPT and Claude are trained on massive datasets, but that training has a cutoff date. On their own, they cannot see anything newer than that cutoff unless someone updates their training. More importantly, they cannot access private data, such as your emails, your calendar, or your company's internal documents, unless someone builds a specific connection.

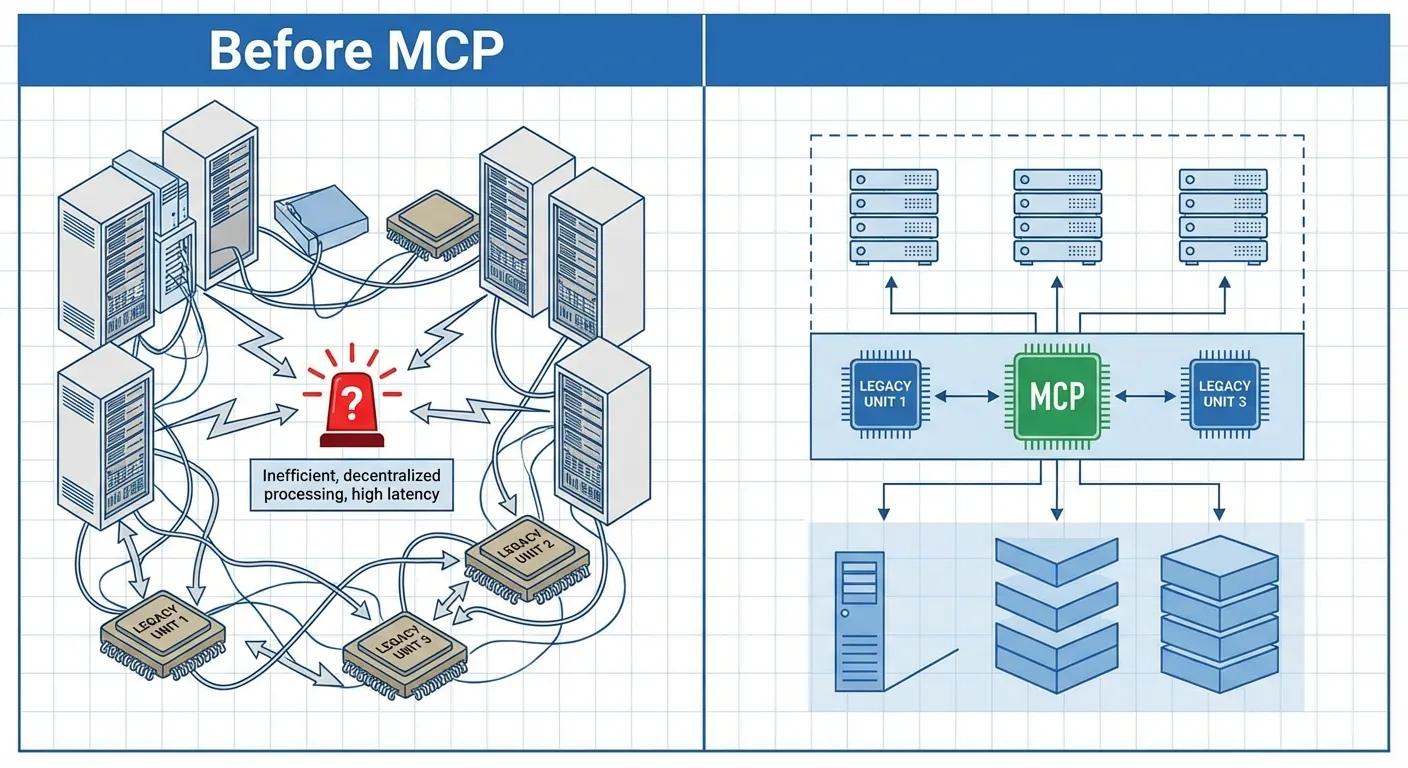

This creates what engineers call the "many-to-many problem." There are dozens of AI models from different companies. There are thousands of tools and data sources those models might need to access. If each model needs a custom integration for each tool, the number of integrations grows as the product of the two: M models and N tools require M × N connectors. It quickly becomes unmanageable.
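To make the scaling argument concrete, here is a small arithmetic sketch. The function names and the example counts are illustrative assumptions, not figures from any survey. It compares wiring every model to every tool directly against having each side implement one shared protocol.

```python
def pairwise_integrations(models: int, tools: int) -> int:
    """Connectors needed when every model gets a custom integration per tool."""
    return models * tools


def shared_protocol_integrations(models: int, tools: int) -> int:
    """Implementations needed when each model and each tool speaks one protocol."""
    return models + tools


# Illustrative counts only (assumed, not measured).
for models, tools in [(5, 100), (12, 1000), (24, 5000)]:
    custom = pairwise_integrations(models, tools)
    shared = shared_protocol_integrations(models, tools)
    print(f"{models} models x {tools} tools: {custom} custom vs {shared} shared")
```

With a dozen models and a thousand tools, the pairwise approach needs twelve thousand connectors, while a shared protocol needs roughly one implementation per participant.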

Previous solutions involved building "plugins" or "agents" for specific AI platforms. ChatGPT has plugins; Claude has tools. But these are platform-specific. A plugin built for ChatGPT does not work with Claude or Gemini. Developers who want to make their tools available to AI users must build separate integrations for each platform, multiplying their workload and limiting adoption.

How MCP Works

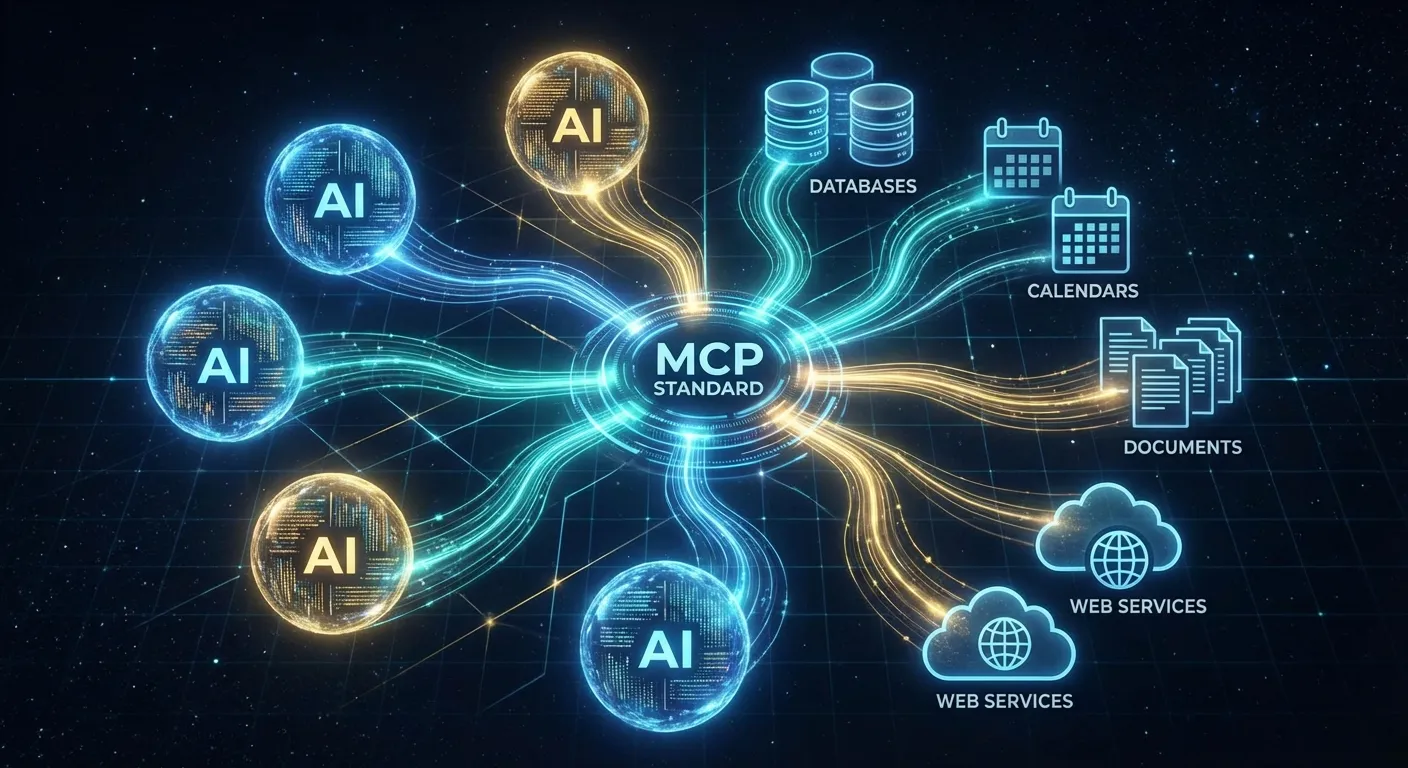

MCP provides a standardized way for AI models to request information and take actions through external systems. Instead of each model needing its own API integration, developers can build a single MCP server that any compatible AI model can use. The model sends a request in MCP format; the server translates that into whatever actions are needed; results flow back through the same channel.
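The request-and-response flow can be sketched in a few lines. MCP messages are JSON-RPC 2.0, and the `tools/call` method and the result shape below follow the published specification as far as I understand it. Everything else is an assumption for illustration: the `list_events` tool, its sample data, and the `handle_message` helper are hypothetical, and a real server would also implement the initialization handshake and a transport, which this toy omits.

```python
import json
from typing import Any, Callable

# Hypothetical tool; a real server would query an actual calendar backend.
def list_events(date: str) -> str:
    events = {"2025-06-01": ["09:00 stand-up", "14:00 design review"]}
    return "; ".join(events.get(date, [])) or "no events"


TOOLS: dict[str, Callable[..., str]] = {"list_events": list_events}


def handle_message(raw: str) -> str:
    """Translate one JSON-RPC request into a tool call and wrap the result."""
    request = json.loads(raw)
    response: dict[str, Any] = {"jsonrpc": "2.0", "id": request.get("id")}
    params = request.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if request.get("method") != "tools/call":
        # Standard JSON-RPC code for an unsupported method.
        response["error"] = {"code": -32601, "message": "Method not found"}
    elif tool is None:
        # Standard JSON-RPC code for invalid parameters.
        response["error"] = {"code": -32602, "message": "Unknown tool"}
    else:
        text = tool(**params.get("arguments", {}))
        response["result"] = {
            "content": [{"type": "text", "text": text}],
            "isError": False,
        }
    return json.dumps(response)


# What a model-side client would send, and what flows back.
request = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "list_events", "arguments": {"date": "2025-06-01"}},
})
reply = json.loads(handle_message(request))
print(reply["result"]["content"][0]["text"])
```

The model never sees how `list_events` is implemented. It only sees the request it sent and the structured result that came back.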

The protocol standardizes the messy details that make integrations difficult: message formatting, error reporting, capability discovery, and, for remote servers, authorization. Policies such as rate limiting still live in the server, but they sit behind the same interface. A developer who builds an MCP server for, say, a calendar application does not need to worry about how different AI models will access it. They build once, and any MCP-compatible AI can use it.

From the AI model's perspective, MCP provides a consistent interface to the external world. The model does not need to know whether it is accessing a calendar, a database, a web API, or a filesystem. It sends MCP requests and receives MCP responses. This abstraction layer makes models more flexible and reduces the complexity of building AI-powered applications.
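The abstraction can be illustrated with a minimal sketch in which two unrelated backends sit behind one interface. The `ToolServer` protocol, the two server classes, and the `invoke` helper are hypothetical names chosen for this example. They loosely mirror the idea of listing and calling tools and are not the API of any official MCP SDK.

```python
from typing import Protocol


class ToolServer(Protocol):
    """The only surface the caller relies on, whatever sits behind it."""

    def list_tools(self) -> list[str]: ...

    def call_tool(self, name: str, arguments: dict) -> str: ...


class CalendarServer:
    def list_tools(self) -> list[str]:
        return ["next_event"]

    def call_tool(self, name: str, arguments: dict) -> str:
        # Sample data stands in for a real calendar lookup.
        return "14:00 design review"


class NotesServer:
    def __init__(self) -> None:
        self._notes = {"todo": "ship the release notes"}

    def list_tools(self) -> list[str]:
        return ["read_note"]

    def call_tool(self, name: str, arguments: dict) -> str:
        return self._notes.get(arguments["title"], "not found")


def invoke(server: ToolServer, name: str, arguments: dict) -> str:
    """Call a tool the same way regardless of which backend provides it."""
    if name not in server.list_tools():
        raise ValueError(f"unknown tool: {name}")
    return server.call_tool(name, arguments)


print(invoke(CalendarServer(), "next_event", {}))
print(invoke(NotesServer(), "read_note", {"title": "todo"}))
```

The `invoke` function contains no calendar-specific or notes-specific logic, which is the property the abstraction layer is meant to provide.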

The Ecosystem Taking Shape

Since Anthropic introduced MCP in November 2024, adoption has accelerated. Major AI companies have added MCP support to their models. Tool developers have created MCP servers for popular services. An open-source ecosystem has sprung up, with developers sharing implementations and building on each other's work.

The result is a network effect familiar from previous platform standards. As more models support MCP, developers have more incentive to build MCP servers. As more servers become available, models that support MCP become more capable. This virtuous cycle can rapidly establish a standard as the default choice, even in competitive markets.

Google's announcement in April 2025 of the Agent2Agent protocol (A2A) adds another layer. While MCP connects models to tools, A2A connects AI agents to each other, allowing AI systems to collaborate on complex tasks. Together, these protocols are creating an infrastructure layer for AI that does not belong to any single company.

Why Standards Matter More Than They Seem

The history of technology is largely a history of standards. Standards are not glamorous, but they determine who wins and who loses, what becomes possible and what remains impractical. The company or community that controls a key standard often controls the trajectory of an entire industry.

Consider the web. Tim Berners-Lee did not invent the most sophisticated hypertext system; he invented one that was simple enough to standardize. HTTP and HTML became universal because anyone could implement them. This openness allowed the web to grow explosively, but it also meant no single company could control it. Microsoft, despite its dominance of desktop computing, could never own the web.

MCP represents a similar moment for AI. If MCP becomes the universal standard for AI-to-tool integration, it will be harder for any single AI company to lock in users through proprietary integrations. Your tools and data become portable. Switching from one AI model to another becomes easier. Competition increases, innovation accelerates, and users gain power.

The Stakes for AI Development

The emergence of MCP comes at a critical moment in AI development. The major AI labs are racing to build more capable models, investing billions in training runs and competing intensely for market share. Developments like world models that learn physics by watching video are expanding what AI systems can do. Regulators are paying attention. Users are wondering who will control these increasingly powerful systems.

MCP's significance in this environment is less about convenience than about market structure. In most technology cycles, the integration layer becomes the choke point. Whoever controls how systems connect to each other extracts value from everyone on both sides. Think of Apple's App Store, Google's ad network, or Amazon's marketplace fees. If AI integration remains proprietary, the platform operators who control those connections will set the terms for every business that depends on AI tools. MCP offers an alternative: a shared protocol that no single company owns, reducing the leverage any one platform can exert.

But standards can also be captured. Large companies sometimes embrace open standards early, gain dominant positions, and then extend those standards in proprietary ways. "Embrace, extend, extinguish" was Microsoft's strategy for dealing with internet standards in the 1990s. MCP's open-source license and the growing base of independent server implementations provide structural resistance to capture, but the developer community will need to actively defend open governance as corporate stakes increase.

The Fundamental Shift

MCP is easy to overlook. It is not a dramatic AI breakthrough like a model that can solve new problems or generate stunning images. It is infrastructure, plumbing, the kind of thing that works best when you do not notice it. But infrastructure shapes what can be built on top of it.

The evidence already points to MCP becoming the default integration layer for AI. Every major model provider now supports or is actively implementing the protocol. The open-source registry lists thousands of MCP servers, covering everything from calendar access to database queries to code repositories. Crucially, individual developers and small companies are building MCP servers at a pace that outstrips any single company's ability to create proprietary alternatives, replicating the same grassroots adoption pattern that established HTTP as the web's universal protocol.

The technical advantages reinforce this trajectory. MCP's simple request-response pattern, built on JSON-RPC 2.0, keeps implementation costs low. A developer can often build a functional MCP server in hours, where platform-specific integrations can take weeks. That asymmetry in effort creates a powerful incentive: every new tool built with MCP support immediately works with every compatible AI model, while a proprietary plugin works with exactly one.

As research shows that AI can already make us overconfident in our own abilities, standards that keep the ecosystem open become even more critical. The deeper question is whether MCP can maintain its neutrality as corporate stakes increase. History offers cautionary examples: Java was supposed to be platform-independent until Oracle's licensing decisions fractured the ecosystem; RSS was an open standard for content syndication until platform incentives made social media feeds more attractive. MCP's defense against a similar fate lies in the breadth of its adoption. With thousands of independently maintained servers already in production, no single company can fork the protocol without stranding most of the ecosystem. That distributed ownership, more than any license or governance charter, is what makes MCP a durable check on concentration in the AI industry.