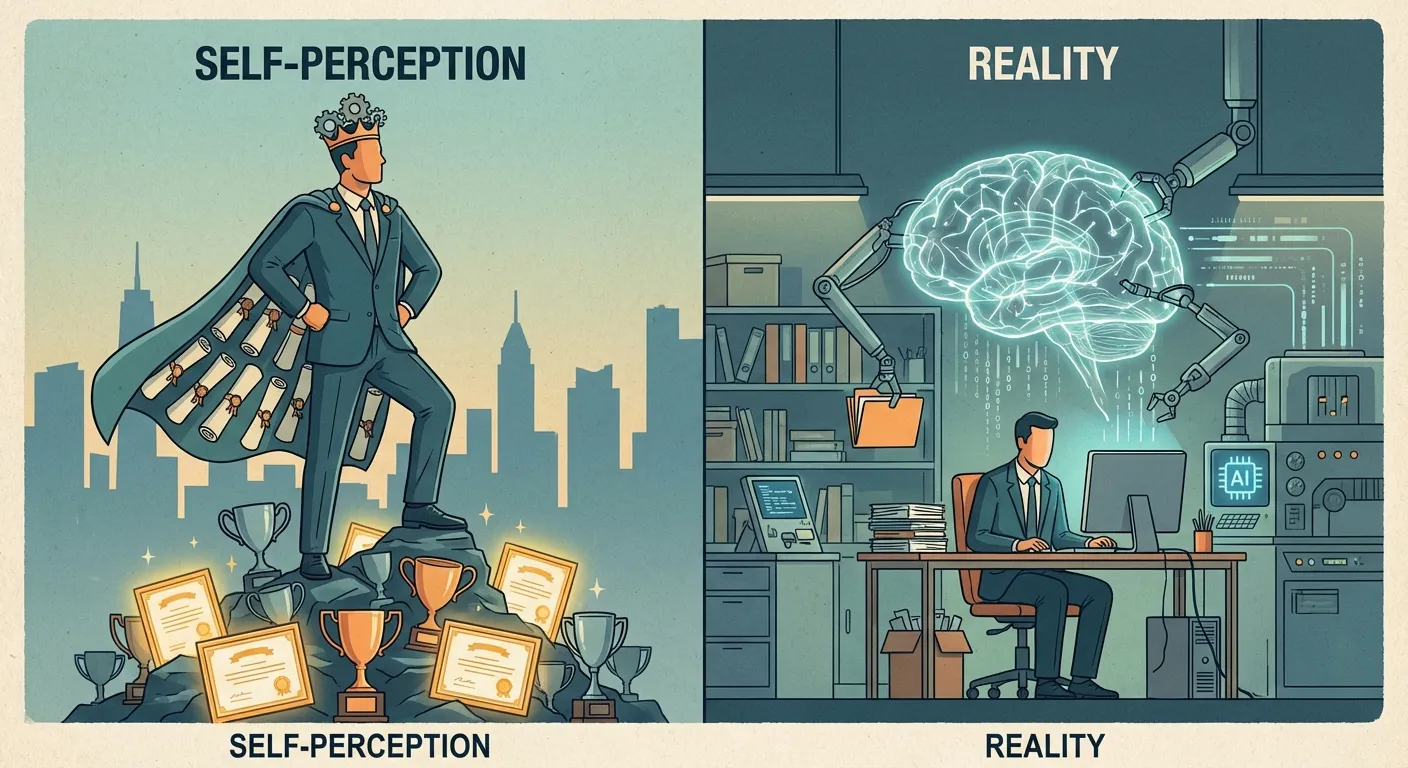

A curious thing happens when people use AI to help them think. They get better at the task, which is expected, but they also become convinced they're much better than they actually are, which isn't. This gap between actual performance and perceived competence has been documented in a growing body of research, and the implications extend far beyond individual productivity.

The most recent study to confirm this pattern, conducted by researchers at MIT's Center for Collective Intelligence led by Pat Pataranutaporn and published in early 2026, tested participants on logical reasoning problems both with and without AI assistance. When using AI, participants improved their scores significantly. Nothing surprising there. But when researchers asked participants to estimate how well they would perform on similar problems without AI help, those who had used AI systematically overestimated their unassisted abilities, while those who had never used AI for the task showed no such inflation. The control group's accuracy at self-assessment made the AI group's distortion unmistakable: something about the experience of AI-augmented success was warping how people judged their own capabilities.

This finding connects to a broader question about what happens when cognitive tools become so seamless that we forget they're tools at all. We've been augmenting our cognition with technology for millennia, from writing systems that externalize memory to calculators that offload arithmetic. But previous tools were obviously tools. You knew you were using a calculator. The question now is whether AI assistance has become so fluid, so conversational, so integrated into our thinking process that we've started to mistake its contributions for our own insights.

The Metacognitive Blind Spot

Psychologists have long studied metacognition, our ability to think about our own thinking, and they've found it's surprisingly unreliable. Just as our brains construct reality through prediction rather than direct observation, our self-assessments rely on mental shortcuts that can lead us astray. People consistently misjudge their competence, sometimes overestimating and sometimes underestimating depending on the domain and their actual skill level. The classic finding, often called the Dunning-Kruger effect, shows that people with limited knowledge in a domain tend to overestimate their abilities, partly because they lack the expertise to recognize what they don't know.

AI assistance appears to create a new version of this blind spot. When you ask an AI a question and it provides a clear, well-reasoned answer, the experience feels collaborative rather than dependent. You're the one who thought to ask the question. You're the one who recognized the good answer when you saw it. You're the one who integrated that answer into your broader thinking. The AI just provided some information along the way. This framing makes it easy to internalize the AI's contribution as your own insight, especially when the assistance happens quickly and conversationally.

The research suggests this isn't just a failure to notice AI's contribution. It's an active process of credit misattribution that happens even when people are explicitly told that AI is helping them. In one experiment, participants were reminded before each task that they were using AI assistance. Despite this constant reminder, the overconfidence effect persisted. Explicit awareness of the tool's presence did not translate into accurate accounting of the tool's contribution. The conscious mind acknowledged the AI; the self-assessment machinery ignored it.

The researchers tested several interventions to reduce this effect. Asking participants to write down which specific ideas came from the AI before estimating their own ability reduced the distortion by roughly 40 percent. Having participants attempt a problem alone before receiving AI help also improved calibration, because the solo attempt created a concrete reference point for unassisted performance. But the simplest and most common workflow, asking AI for help from the start with no deliberate reflection afterward, consistently produced the largest overconfidence gaps.

The Fluency Trap

Part of what makes AI assistance so cognitively invisible is its fluency. When an AI produces text that flows naturally, answers questions conversationally, and generates ideas that seem plausible, it creates what psychologists call processing fluency, a sense of ease and naturalness that our brains interpret as a signal of truth and competence. We experience the AI's output as obviously correct, and because the experience is seamless, we don't stop to question where the correctness came from.

This fluency trap is distinct from the older problem of automation bias, the tendency to over-rely on automated systems because we assume computers are more accurate than humans. Automation bias is about misplaced trust in an external system; you know the system exists and you defer to it too readily. The fluency trap operates at a deeper level, altering your internal model of your own capabilities. It is the difference between thinking "the GPS is probably right" and thinking "I have a great sense of direction," when in reality you have been following the GPS for so long that you've lost the ability to navigate without it.

The researchers, building on earlier work by cognitive psychologist Daniel Kahneman on the distinction between fast intuitive thinking and slow deliberate reasoning, found that fluency plays a measurable role in the overconfidence effect. When AI assistance was made deliberately clunky and awkward, with obvious delays and mechanical responses, participants showed less overconfidence afterward. The awkwardness served as a constant reminder that an external tool was involved, making it harder to mistake the tool's contributions for native ability. This suggests that the seamlessness we value in AI interfaces may come with hidden cognitive costs.

Consider how this differs from using a traditional reference tool like an encyclopedia. When you look something up in a book, the boundary between your knowledge and the book's information remains clear. You know what you knew before consulting the book and what you learned from it. But when you have a conversation with an AI, that boundary blurs. The AI's responses feel like natural extensions of your own thinking rather than discrete chunks of external information. You might struggle afterward to remember which insights were yours and which the AI provided.

The Expertise Paradox

Interestingly, the overconfidence effect isn't uniform across skill levels. People with moderate existing expertise show the largest gaps between perceived and actual unassisted ability. Beginners know they're beginners and tend to attribute AI improvements to the AI. True experts have enough knowledge to maintain accurate self-assessment even when AI contributes. It's the middle group, competent but not expert, that's most vulnerable to absorbing AI capabilities into their self-image.

This creates a paradox for professional development. The people most likely to benefit from AI assistance, those who have solid foundations but haven't mastered their domain, are also the people most likely to develop inflated self-assessments from using it. They're skilled enough that AI augmentation feels like a natural extension of their abilities, but not skilled enough to accurately judge where their abilities end and AI's begin.

The implications for training and education are significant. If students learn with constant AI assistance, they may develop skills that are genuinely useful in AI-augmented environments while simultaneously developing inaccurate beliefs about their standalone capabilities. When they encounter situations where AI isn't available or appropriate, they may make poor judgments based on an inflated sense of what they can do. The challenge for educators is finding ways to build AI-augmented competence while maintaining accurate metacognition.

Some researchers have suggested that deliberate practice without AI, combined with explicit calibration exercises where people predict their performance and then see actual results, might help maintain accurate self-assessment. Others argue that as AI becomes ubiquitous, unaugmented performance matters less, and we should simply accept that future competence will be inherently tool-dependent. The debate mirrors broader questions about how we should think about human capability in an age of pervasive cognitive technology.

The Social Dimension

The overconfidence effect has a social dimension that makes it particularly concerning. When people believe they're more competent than they are, they tend to seek positions of authority, advocate for their opinions more forcefully, and resist feedback that challenges their self-image. If AI systematically inflates the confidence of its users, we might expect to see these users dominating discussions, securing leadership positions, and shaping decisions in ways that don't reflect their actual unaugmented capabilities.

This connects to how recommendation algorithms shape our information environment in ways we often don't notice. Just as algorithms curate content that reinforces our existing preferences, AI assistance may curate our self-image by consistently helping us succeed and thereby convincing us that success comes from our own abilities. The technology becomes invisible through its effectiveness, which makes its influence on our self-perception particularly hard to detect or resist.

There's also a competitive dynamic at play. In professional environments where AI use is common but not universal, people who use AI have real performance advantages. If they also develop inflated confidence, they may appear even more competent than their AI-augmented performance warrants, widening the apparent gap with non-users. This creates selection pressure for AI adoption driven by the confidence benefits as well as the performance benefits, potentially spreading both the advantages and the metacognitive distortions throughout professional communities.

The sunk cost fallacy offers an interesting parallel. Just as we struggle to abandon investments we've already made, even when abandonment is rational, we may struggle to abandon self-assessments we've formed through AI-augmented success. Having internalized a sense of competence, we resist information that would require revising that self-image downward, even when the evidence for revision is clear.

The Deeper Question

What does it mean for human cognition when our most powerful thinking tools are also our most invisible ones? This question has no simple answer, but the research on AI-induced overconfidence suggests we need to think carefully about the relationship between cognitive augmentation and self-knowledge.

Previous cognitive tools, from writing to calculators to search engines, extended human capability while remaining obviously external. You knew your notes were in a notebook. You knew your calculations were on a calculator. You knew your information came from Google. The externality of these tools preserved a clear boundary between self and tool, even as that boundary became more permeable with each generation of technology.

AI threatens to dissolve this boundary in ways we're only beginning to understand. When a tool thinks conversationally, responds to natural language, and produces outputs that feel like natural extensions of our own reasoning, the usual markers of externality disappear. We're left with enhanced capability and diminished self-knowledge, a combination that creates new kinds of cognitive vulnerability.

This doesn't mean we should abandon AI assistance. The benefits are too significant, and the technology is too embedded in professional workflows to retreat from. But it does suggest we need new practices for maintaining accurate self-assessment in AI-augmented environments. Deliberate practice without AI, calibration exercises, explicit acknowledgment of AI contributions, and cultural norms that value accurate self-assessment over confidence could all help preserve the metacognitive clarity that AI assistance tends to erode.

The deeper issue is whether our conception of individual competence needs updating for an age of ubiquitous cognitive augmentation. Perhaps the relevant question isn't what you can do without AI, but how effectively you can collaborate with AI and how accurately you understand the nature of that collaboration. If so, the overconfidence that researchers have documented isn't just a bug to be fixed but a symptom of a broader mismatch between our inherited concepts of individual ability and the reality of technologically augmented cognition.

For now, the most useful habit may be the simplest: periodically attempt tasks without AI assistance, not because unaugmented work is inherently superior, but because the attempt generates honest feedback about where you stand. Athletes train without equipment to understand their baseline. Musicians practice difficult passages without a metronome to test their internal sense of timing. The same principle applies to cognitive work. Knowing your unassisted baseline is not nostalgia for a pre-AI era; it is the calibration data your self-assessment system needs to remain accurate in an AI-augmented one.

Sources

- AI Makes You Smarter, But None The Wiser: The Disconnect Between Performance and Metacognition - Pataranutaporn et al. research on AI-induced overconfidence and metacognitive distortions

- The Dunning-Kruger effect and metacognition - Original Kruger and Dunning research on self-assessment biases

- Processing fluency and cognitive judgments - Science article on how ease of processing affects perceived truth

- AI use makes us overestimate our cognitive performance - Aalto University research on AI-augmented performance and overconfidence