When you swing a bat at a baseball, your brain performs a calculation that would humble most computers. In roughly 400 milliseconds, it processes the ball's trajectory, predicts its future position, coordinates dozens of muscles, and adjusts for wind, spin, and your own momentum. It does all this while consuming about 20 watts of power, roughly what it takes to run a dim light bulb. A supercomputer solving the same fluid dynamics and trajectory equations in detail might need megawatts.

This asymmetry, the fact that biological brains handle physics effortlessly while digital computers struggle expensively, has haunted computer science for decades. Now researchers at Sandia National Laboratories have demonstrated something many experts doubted was possible: neuromorphic computers, machines built to mimic the brain's architecture, can solve the very equations that underpin physics simulations. The work, published in Nature Machine Intelligence, doesn't just promise cheaper computation. It suggests that the brain has been doing math all along, and we're only now learning to read its notation.

"You can solve real physics problems with brain-like computation," said James B. Aimone, a computational neuroscientist at Sandia. "That's something you wouldn't expect because people's intuition goes the opposite way."

The Energy Problem Nobody Talks About

Modern science runs on simulation. Climate models, nuclear stockpile assessments, aircraft design, molecular dynamics for drug discovery: all depend on solving differential equations, most often partial differential equations (PDEs), the mathematical language that describes how physical quantities change across space and time. The machines that solve these equations, traditional supercomputers, are engineering marvels. They're also voracious consumers of electricity.

The world's top supercomputers draw power measured in tens of megawatts. Frontier, the Oak Ridge National Laboratory machine that was the first to break the exascale barrier, draws more than 20 megawatts when running at full capacity. That's enough to power roughly 16,000 American homes. And demand is growing: as simulations become more detailed and fields from climate science to genomics require higher resolution, the computational appetite keeps expanding. The Department of Energy, which funds many of these machines (including, through the National Nuclear Security Administration, those used for nuclear weapons work), has been searching for alternatives for years.
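The homes comparison is simple arithmetic. A minimal sketch, assuming an average U.S. household uses about 10,500 kWh of electricity per year (a round figure assumed here, not taken from the sources), converts a facility's continuous power draw into an equivalent number of homes:

```python
# Back-of-envelope check of the "roughly 16,000 homes" comparison.
# ASSUMPTION: an average U.S. household uses about 10,500 kWh per year.
HOURS_PER_YEAR = 365 * 24  # 8,760 hours


def homes_powered(facility_mw: float, household_kwh_per_year: float = 10_500.0) -> float:
    """Number of average households whose mean load equals the facility's draw."""
    avg_household_kw = household_kwh_per_year / HOURS_PER_YEAR  # about 1.2 kW averaged over a year
    return facility_mw * 1_000 / avg_household_kw  # convert MW to kW, then divide


print(round(homes_powered(20)))  # Frontier at about 20 MW: roughly 16,700 homes
```

Since Frontier draws somewhat more than 20 MW, "roughly 16,000 homes" is a conservative round number under this assumption.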

The energy equation gets worse when you consider where computation is heading. Edge computing, autonomous vehicles, battlefield sensors, and space probes all need physics-capable processing in environments where you can't plug into a power grid. A Mars rover can't offload a fluid dynamics simulation to a supercomputer back on Earth, not with a one-way signal delay that can exceed 20 minutes. If machines are going to think about physics in real time, in real environments, they need to do it cheaply.

This is the gap neuromorphic computing was designed to fill. But until recently, brain-inspired chips were mostly applied to pattern recognition tasks: identifying faces, classifying sounds, flagging anomalies. Few had shown they could handle the rigorous mathematical formalism that physics demands. The common assumption was that spiking neural networks, which communicate through discrete electrical pulses rather than continuous signals, simply weren't precise enough for differential equations.

Aimone and his Sandia colleague Brad Theilman proved that assumption wrong.
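The precision worry was never absolute, even for the simplest spiking model. A minimal sketch, assuming an idealized non-leaky integrate-and-fire neuron with reset-by-subtraction (a textbook model chosen for illustration, not the neuron model Sandia used), shows that counting spikes over a time window recovers a constant input to within one spike's worth of charge, so the error shrinks as the window grows:

```python
def count_spikes(input_current: float, steps: int, dt: float = 1.0, threshold: float = 1.0) -> int:
    """Idealized non-leaky integrate-and-fire neuron with reset-by-subtraction.

    Assumes a non-negative, constant input. Returns the number of spikes
    emitted over `steps` time steps of length `dt`.
    """
    v = 0.0
    spikes = 0
    for _ in range(steps):
        v += input_current * dt      # integrate the incoming charge
        while v >= threshold:        # fire (possibly more than once per step) on crossing
            spikes += 1
            v -= threshold           # keep the leftover charge instead of zeroing it
    return spikes


def decode_rate(input_current: float, steps: int, dt: float = 1.0, threshold: float = 1.0) -> float:
    """Estimate the input from the spike rate.

    Up to floating-point rounding, the error stays below threshold / (steps * dt).
    """
    return count_spikes(input_current, steps, dt, threshold) * threshold / (steps * dt)


for steps in (10, 100, 1_000, 10_000):
    estimate = decode_rate(0.3141, steps)
    print(f"{steps:>6} steps: estimate={estimate:.4f}  error={abs(estimate - 0.3141):.5f}")
```

Precision here is traded for time: halving the worst-case error requires doubling the window. Real neuromorphic chips may use richer encodings; the sketch only shows that spikes are not inherently imprecise.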

How Brain-Inspired Chips Actually Work

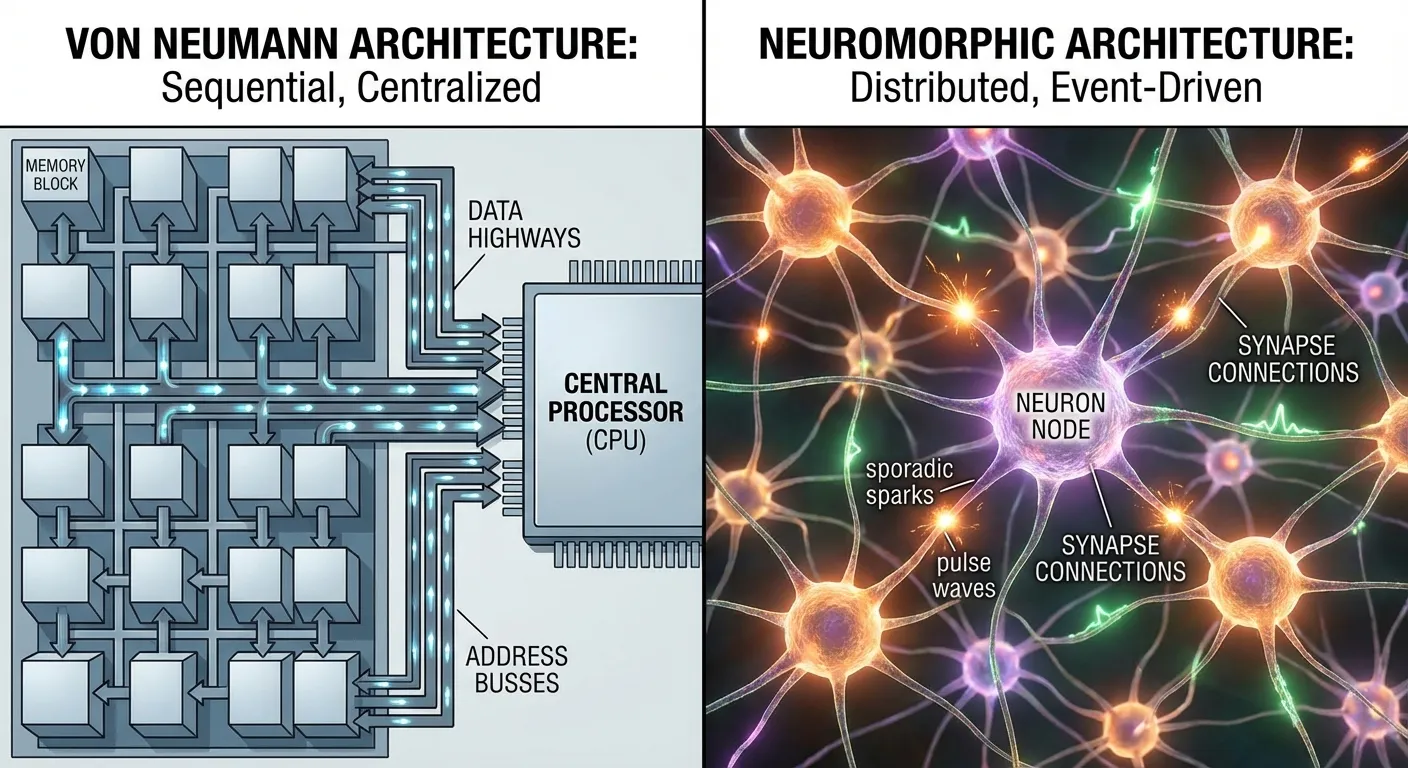

To understand why the Sandia breakthrough matters, you need to understand how neuromorphic chips differ from conventional processors. A standard computer chip, whether it's the CPU in your laptop or a node in a supercomputer, processes information by executing instructions through logic gates, one step after another. Data moves from memory to processor, gets transformed according to instructions, and moves back. This architecture, fundamentally unchanged since John von Neumann described it in 1945, separates storage from computation. It's powerful, flexible, and energy-hungry, because every operation requires shuttling data back and forth between memory and processor.

Neuromorphic chips abandon this model entirely. Instead of logic gates and memory banks, they use artificial neurons connected by artificial synapses. Information is encoded not as binary digits but as patterns of electrical spikes, much as biological neurons communicate. Intel's latest neuromorphic chip, Loihi 3, fabricated on a 4-nanometer process, packs 8 million digital neurons and 64 billion synapses onto a single piece of silicon. That's an eightfold increase in neuron count over its predecessor, approaching the complexity of a small mammalian brain structure.

The key advantage isn't raw speed but efficiency. In a neuromorphic system, neurons only fire when they have something to communicate, and inactive neurons draw very little power. This "event-driven" processing mirrors the brain's own energy management: your visual cortex doesn't process every pixel in your visual field at all times; it responds mainly to change and relevance. Recent benchmarks have shown neuromorphic systems achieving 70 times faster performance and 5,600 times greater energy efficiency than GPU-based systems for certain continuous learning tasks. The ANYmal D Neuro, a quadruped inspection robot running on Intel's Loihi 3, demonstrated 72 hours of continuous operation on a single battery charge, nine times longer than previous GPU-powered versions.
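The event-driven idea can be made concrete by counting work rather than watts. A toy sketch, assuming a hypothetical one-dimensional "image" in which a bright bar slides one pixel per frame across a dark background, compares a clock-driven loop that touches every pixel on every frame with an event-driven loop that does work only where a pixel changes:

```python
def moving_bar(width: int = 1_000, bar: int = 10, n_frames: int = 100) -> list[list[float]]:
    """Hypothetical scene: a bright bar moves one pixel per frame over a dark background."""
    return [[1.0 if t <= x < t + bar else 0.0 for x in range(width)] for t in range(n_frames)]


def clock_driven_updates(frames: list[list[float]]) -> int:
    """Conventional approach: every pixel is processed on every frame."""
    return sum(len(frame) for frame in frames)


def event_driven_updates(frames: list[list[float]], threshold: float = 0.1) -> int:
    """Event-driven approach: process the first frame once, then only the pixels
    whose value changed by more than `threshold` since the previous frame."""
    updates = len(frames[0])
    for previous, current in zip(frames, frames[1:]):
        updates += sum(1 for old, new in zip(previous, current) if abs(new - old) > threshold)
    return updates


frames = moving_bar()
print(clock_driven_updates(frames), event_driven_updates(frames))  # → 100000 1198
```

The gap, roughly 80-fold here, depends entirely on how sparse the activity is; a scene in which every pixel flickers would erase it. Update counts are also only a loose proxy for energy on real hardware.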

But all of this efficiency meant nothing if the chips couldn't handle serious mathematics. Pattern recognition is useful. Solving the Navier-Stokes equations that govern fluid dynamics, the Maxwell equations that describe electromagnetic fields, or the structural mechanics equations that predict whether a bridge will hold: that's a different universe of computational challenge.
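What "solving a PDE" means in practice is worth pinning down. The Nature Machine Intelligence paper's title refers to sparse finite element problems: discretizing a PDE on a mesh turns it into a large linear system in which each unknown couples only to its neighbors. A minimal sketch, assuming the simplest possible case (the 1-D Poisson equation -u'' = f on [0, 1] with u = 0 at both ends, constant f, and linear elements), builds that sparse system and solves it with the Thomas algorithm, a conventional step-by-step direct solver. It illustrates the problem class only, not Sandia's neuromorphic method:

```python
def assemble_poisson_1d(n_elements: int, f: float = 1.0):
    """Linear finite elements for -u'' = f on [0, 1] with u(0) = u(1) = 0.

    Returns the tridiagonal stiffness matrix as three diagonals, plus the
    load vector, for the interior (unknown) nodes only.
    """
    h = 1.0 / n_elements
    n = n_elements - 1                 # number of interior nodes
    lower = [-1.0 / h] * (n - 1)       # sub-diagonal
    diag = [2.0 / h] * n               # main diagonal
    upper = [-1.0 / h] * (n - 1)       # super-diagonal
    load = [f * h] * n                 # integral of constant f times each hat function
    return lower, diag, upper, load


def solve_tridiagonal(lower, diag, upper, rhs):
    """Thomas algorithm: Gaussian elimination specialised to tridiagonal systems, O(n)."""
    n = len(diag)
    c = [0.0] * n                      # modified super-diagonal
    d = [0.0] * n                      # modified right-hand side
    c[0] = upper[0] / diag[0] if n > 1 else 0.0
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):              # forward elimination
        denom = diag[i] - lower[i - 1] * c[i - 1]
        c[i] = upper[i] / denom if i < n - 1 else 0.0
        d[i] = (rhs[i] - lower[i - 1] * d[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):     # back substitution
        x[i] = d[i] - c[i] * x[i + 1]
    return x


n_elements = 8
u = solve_tridiagonal(*assemble_poisson_1d(n_elements))
nodes = [(i + 1) / n_elements for i in range(n_elements - 1)]
exact = [x * (1 - x) / 2 for x in nodes]
max_error = max(abs(a - b) for a, b in zip(u, exact))
print(max_error)  # at rounding level: this 1-D case is exact at the nodes
```

For constant f this 1-D case happens to reproduce the exact solution u(x) = x(1 - x)/2 at the mesh nodes, which makes a convenient correctness check. Real simulations have millions of unknowns in two or three dimensions, but the matrix stays sparse in the same way: each row holds only a handful of nonzeros.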

The PDE Breakthrough

Brad Theilman's insight was recognizing a hidden mathematical relationship. Spiking neural networks, it turns out, have a "natural but non-obvious link" to partial differential equations. The way that voltage builds up in an artificial neuron, reaches a threshold, fires, and resets follows mathematical dynamics that can be mapped onto the same frameworks used to solve PDEs. The connection had been sitting in plain sight, obscured by the fact that neuroscientists and applied mathematicians rarely attend the same conferences.

"We've shown the model has a natural but non-obvious link to PDEs, and that link hasn't been made until now," Theilman explained. His algorithm doesn't simply transplant a conventional solver's step-by-step arithmetic onto a neural network. Instead, it exploits the inherent dynamics of spiking neurons, using their natural voltage fluctuations as a computational substrate for solving sparse finite element problems, one of the standard discretized forms in which engineers pose PDEs. The physics, in a sense, is already happening inside the chip. Theilman's contribution was showing how to harness it.
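The threshold, fire, and reset cycle described above is easy to simulate. A minimal sketch, assuming the textbook leaky integrate-and-fire model (the article does not say which neuron model the Sandia algorithm uses, and every parameter value here is illustrative), integrates the membrane equation tau * dV/dt = -(V - V_rest) + R * I with forward Euler and resets the voltage after each spike. The sub-threshold update is itself one time step of a numerical differential-equation solver, a small hint of why neuron dynamics and PDE solvers can share a vocabulary:

```python
def simulate_lif(current: float, t_max: float = 100.0, dt: float = 0.1, tau: float = 10.0,
                 v_rest: float = 0.0, v_threshold: float = 1.0, v_reset: float = 0.0,
                 resistance: float = 1.0):
    """Leaky integrate-and-fire neuron driven by a constant input current.

    Forward-Euler integration of tau * dV/dt = -(V - v_rest) + R * I.
    Returns the membrane-voltage trace and the list of spike times.
    """
    v = v_rest
    trace, spike_times = [], []
    for step in range(int(round(t_max / dt))):
        v += dt / tau * (-(v - v_rest) + resistance * current)  # leak toward rest, plus input
        if v >= v_threshold:                 # threshold crossed: emit a spike
            spike_times.append((step + 1) * dt)
            v = v_reset                      # reset the membrane after firing
        trace.append(v)
    return trace, spike_times


for current in (0.9, 1.5, 3.0):
    _, spikes = simulate_lif(current)
    print(f"input {current}: {len(spikes)} spikes in 100 time units")
```

With these settings an input of 0.9 never fires, because the voltage settles at R * I = 0.9, just below threshold, while larger inputs fire at rates that rise with the input.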

The approach worked on the kinds of equations that matter most to national security and engineering: fluid dynamics simulations, electromagnetic field calculations, and structural mechanics problems. These are the workhorses of computational physics, the equations that the National Nuclear Security Administration uses to maintain the nuclear deterrent without underground testing, and that aerospace engineers use to design aircraft without building every prototype.

What makes this more than an incremental improvement is the energy implication. If neuromorphic hardware keeps even a fraction of its pattern-recognition efficiency advantage when solving PDEs, the potential savings are staggering. A neuromorphic system using one-thousandth the power of a traditional supercomputer to solve the same equations would transform which problems science can afford to tackle. Climate simulations that currently require months of supercomputer time might become feasible on smaller, dedicated neuromorphic clusters. Real-time physics computation on autonomous vehicles and robots would become practical rather than aspirational.
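The scale of that hypothetical is easiest to see in energy terms. A back-of-envelope sketch, assuming a month-long run on a machine drawing about 20 MW, a neuromorphic system drawing one-thousandth of that for the same wall-clock time (itself an optimistic assumption), and an assumed electricity price of $80 per MWh:

```python
def run_energy_mwh(power_mw: float, days: float) -> float:
    """Energy in MWh for a machine drawing `power_mw` continuously for `days` days."""
    return power_mw * days * 24  # MW times hours gives MWh


# Hypothetical scenario; none of these numbers come from the Sandia work.
DAYS = 30
PRICE_USD_PER_MWH = 80.0                           # ASSUMPTION: illustrative electricity price
conventional = run_energy_mwh(20.0, DAYS)          # about 14,400 MWh
neuromorphic = run_energy_mwh(20.0 / 1_000, DAYS)  # about 14.4 MWh

print(f"conventional: {conventional:,.1f} MWh, ~${conventional * PRICE_USD_PER_MWH:,.0f}")
print(f"neuromorphic: {neuromorphic:,.1f} MWh, ~${neuromorphic * PRICE_USD_PER_MWH:,.0f}")
```

The thousandfold saving only materializes if the neuromorphic run finishes in comparable time; a slower solver gives back part of the gain.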

The Convergence Pattern

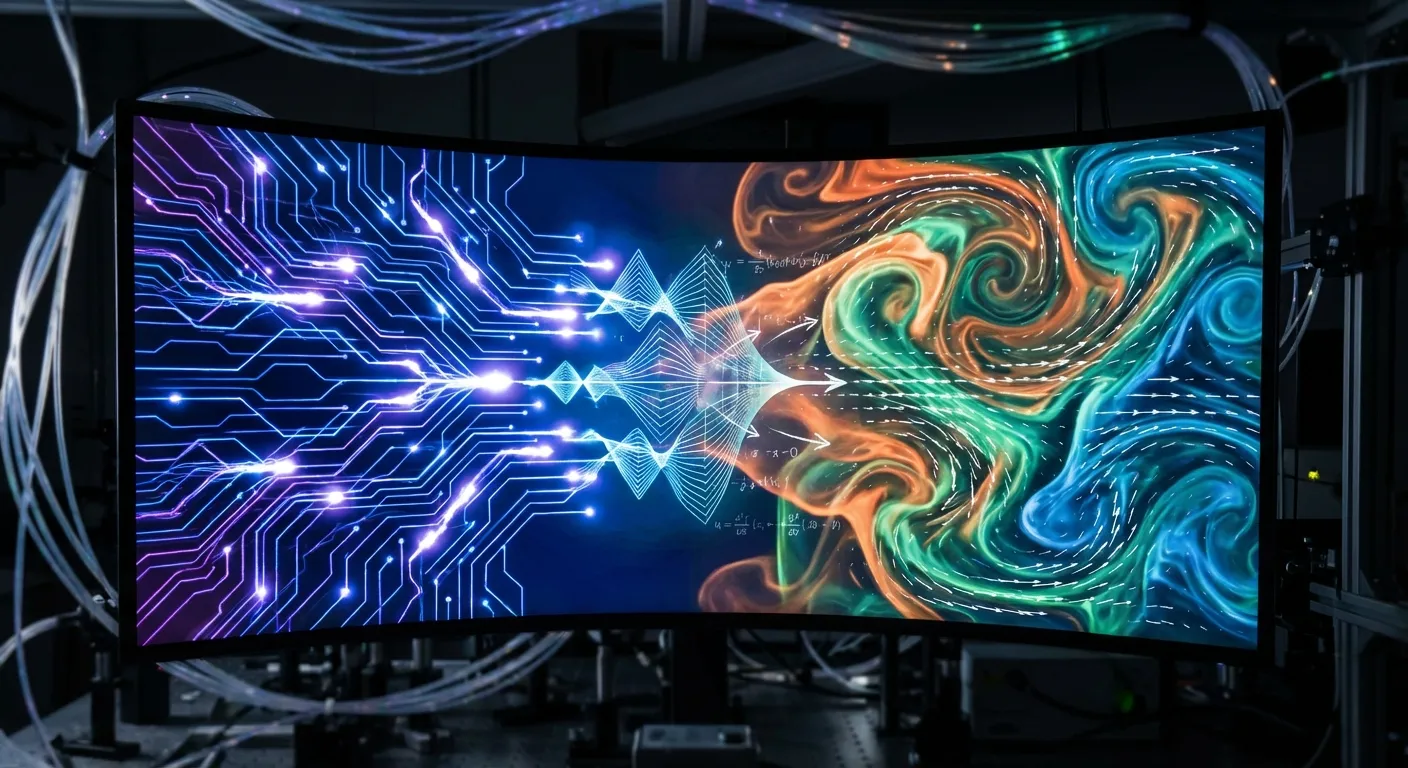

There's a deeper pattern at work here that extends beyond any single breakthrough. For most of computing's history, progress meant making the von Neumann architecture faster: smaller transistors, higher clock speeds, more cores. Quantum computing represents one departure from that playbook, attacking certain problems through fundamentally different physics. Neuromorphic computing represents another, attacking the efficiency problem by abandoning the architecture entirely.

What's striking is that both alternative paradigms draw inspiration from nature. Quantum computers exploit the superposition and entanglement that govern matter at the smallest scales. Neuromorphic computers exploit the spiking dynamics that govern biological neurons. In both cases, the innovation isn't purely engineering. It's recognizing that natural systems already solve certain problems efficiently, and that the right approach is to build hardware that embodies those natural solutions rather than simulating them on conventional machines.

This represents a philosophical shift in how we think about computation. The von Neumann model treats computing as instruction execution: tell the machine exactly what to do, step by step. Neuromorphic computing treats it as dynamics: set up the right physical system and let the solution emerge from its natural behavior. The difference is analogous to the gap between sculpting a wave out of clay and building a tank of water where waves form on their own. Theilman and Aimone's breakthrough suggests that for physics problems specifically, the second approach might be not just more efficient but more natural, because the math that describes neuron dynamics and the math that describes physical systems share the same deep structure.
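The contrast between instructions and dynamics can be shown in a few lines. A minimal sketch, using a generic, non-spiking stand-in (forward-Euler integration of the gradient flow du/dt = b - A u, also known as Richardson iteration; this is not Theilman and Aimone's algorithm), solves a sparse system of the kind finite elements produce by setting up a dynamical system whose resting state is the answer and letting it settle. It assumes A is symmetric positive definite and the step size is below 2 divided by A's largest eigenvalue; a dense matrix is used only for brevity:

```python
def relax_to_solution(matrix, rhs, step=0.25, tol=1e-10, max_iters=100_000):
    """Solve A u = b by letting du/dt = b - A u settle to its fixed point.

    Forward-Euler integration of the flow (Richardson iteration). Converges for
    symmetric positive-definite A when 0 < step < 2 / (largest eigenvalue of A).
    Returns the approximate solution and the number of steps taken.
    """
    n = len(rhs)
    u = [0.0] * n
    for iteration in range(max_iters):
        residual = [rhs[i] - sum(matrix[i][j] * u[j] for j in range(n)) for i in range(n)]
        if max(abs(r) for r in residual) < tol:           # the system has come to rest
            return u, iteration
        u = [u[i] + step * residual[i] for i in range(n)]  # one small time step of the flow
    return u, max_iters


# 1-D Poisson problem -u'' = 1 on [0, 1], u(0) = u(1) = 0, seven interior nodes,
# in finite-difference form 2u_i - u_{i-1} - u_{i+1} = h^2 (linear elements give
# the same system scaled by 1/h).
n, h = 7, 1.0 / 8
A = [[2.0 if i == j else -1.0 if abs(i - j) == 1 else 0.0 for j in range(n)] for i in range(n)]
b = [h * h] * n
u, steps_taken = relax_to_solution(A, b)
exact = [x * (1 - x) / 2 for x in ((i + 1) * h for i in range(n))]
print(steps_taken, max(abs(a - e) for a, e in zip(u, exact)))
```

A direct solver follows a fixed recipe of eliminations; here the answer is simply where the dynamics come to rest, which is the sense in which the article contrasts instruction execution with dynamics. Relaxation like this is slow on a conventional CPU, and any spiking implementation would look quite different.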

That kinship isn't entirely surprising when you consider evolution's track record. Brains evolved to model physics, to predict where a thrown rock will land, to estimate whether a branch will support a body's weight, to calculate the trajectory of a lunging predator. As some researchers studying AI's ability to learn physics from observation have argued, biological neural networks are, in effect, physics engines. The Sandia team's contribution is showing that artificial spiking networks preserve at least part of that capability, if you know where to look.

What This Means

The path from laboratory demonstration to practical deployment is long. Theilman and Aimone solved PDEs on neuromorphic hardware, but they haven't yet shown that the approach scales to the full complexity of real-world simulations, where millions of interacting variables must be tracked simultaneously. The algorithm needs to be tested on increasingly difficult problems, and the hardware needs to mature beyond current neuromorphic platforms.

But a conceptual barrier has been broken. The assumption that brain-inspired chips were limited to perception tasks, that they couldn't handle the mathematical rigor of physics, no longer holds. Aimone framed the implication in characteristically ambitious terms: the work could "open the door to the first neuromorphic supercomputer." If that door does open, the consequences extend beyond faster or cheaper computation. A neuromorphic supercomputer might process physics the way a brain does, through emergent dynamics rather than brute-force calculation, potentially revealing connections between physical systems that sequential computation obscures.

There's also a tantalizing feedback loop. Understanding how neuromorphic chips solve physics problems might teach us something about how biological brains do it. Aimone noted that the research connects neuroscience with applied mathematics in ways that could eventually inform treatment of neurological disorders like Alzheimer's and Parkinson's. If spiking neurons and differential equations share deep mathematical structure, then understanding one might illuminate the other.

For now, the practical takeaway is more modest but still significant. There exists a class of hardware, inspired by biology, that can solve the equations of physics at potentially revolutionary efficiency. The brain, it turns out, has been doing calculus all along. We just needed to build the right translator.

Sources

- ScienceDaily: "Brain inspired machines are better at math than expected"

- Inside HPC: "Sandia: Brain-Inspired Neuromorphic Computers 'Shockingly Good' at Math"

- Nature Machine Intelligence: "Solving sparse finite element problems on neuromorphic hardware" (2025; 7: 1845-1857)

- Sandia National Laboratories: "Nature-inspired computers are shockingly good at math"