In July 1997, a small, six-wheeled robot named Sojourner rolled off a landing platform on Mars and drove approximately one meter. Then it stopped and waited for instructions from Earth. Every command had to cross hundreds of millions of kilometers of space, arrive at the rover, be executed, and then be confirmed by a return signal. Sojourner's total driving distance over its 83-sol mission was about 100 meters. It moved with the pace and deliberation of a toddler learning to walk, and for good reason: no one had ever driven a robot on another planet before, and the consequences of a mistake were permanent.

Twenty-eight years later, in December 2025, Perseverance crossed the rim of Jezero Crater following a route it had, in a meaningful sense, chosen for itself. The gap between those two moments is the story of how humans learned to let go, gradually and reluctantly, of direct control over machines operating in places where rescue is impossible and second chances don't exist.

From Remote Control to Self-Direction

The evolution of Mars rover autonomy follows a pattern recognizable from other domains where humans have gradually ceded control to machines: aviation, manufacturing, medicine. The pattern involves three stages, each representing a different relationship between human decision-making and machine capability.

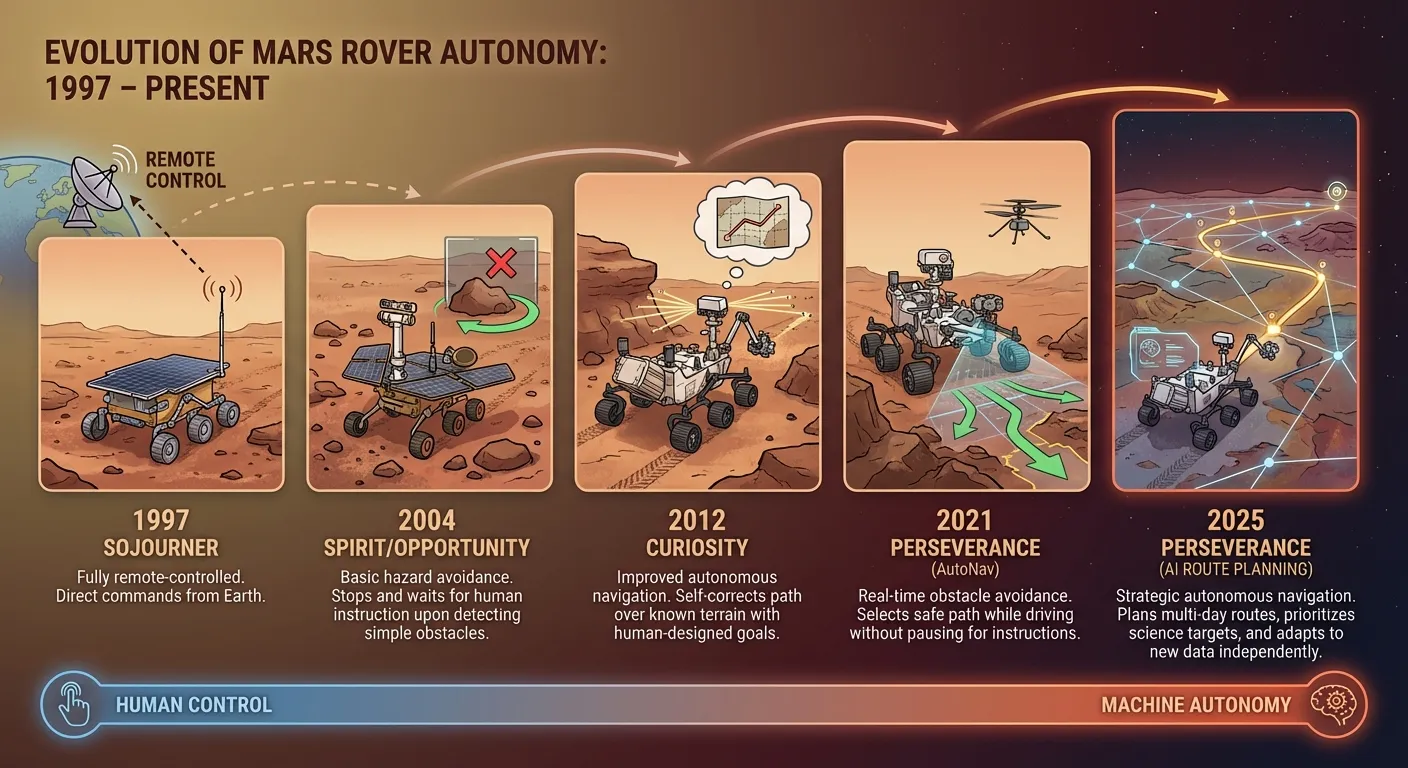

The first stage is remote control, where every significant decision is made by a human and transmitted to the machine. Sojourner operated this way. So did Spirit and Opportunity, the twin rovers that landed in 2004, in their early days. Their human operators at JPL would study imagery from the previous sol, identify a target, plan a route, and upload detailed commands. The rover executed those commands and sent back results. If something unexpected appeared in the path, the rover stopped and waited for new instructions.

The second stage is supervised autonomy, where the machine makes certain categories of decisions independently while humans retain oversight of strategic direction. Perseverance's AutoNav system operates at this level. AutoNav uses the rover's own cameras to build 3D terrain maps, identify hazards, and navigate around obstacles without waiting for human input. The rover can drive itself through complex terrain, making hundreds of micro-decisions per meter about where to place its wheels. But the overall direction, the question of which general area to head toward, has remained a human decision.
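The hazard-avoidance idea described above can be illustrated with a toy example. This is a minimal sketch, not AutoNav's actual algorithm, which the article does not describe. It assumes the terrain map has already been reduced to a small grid in which 1 marks a hazard and 0 marks drivable ground, and it uses a plain breadth-first search to find a shortest hazard-free route. The names `plan_path` and `TERRAIN` are hypothetical.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Breadth-first search over a hazard grid.

    grid[r][c] == 1 marks a hazard, 0 marks drivable ground.
    Returns a list of (row, col) cells from start to goal, or None.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}  # also serves as the visited set
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None  # every route is blocked


# Hypothetical 4x4 terrain patch: the goal at (2, 2) sits behind a rock cluster.
TERRAIN = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 1],
]

if __name__ == "__main__":
    print(plan_path(TERRAIN, (0, 0), (2, 2)))
```

A real rover has to weigh slope, wheel slip, and uncertainty in the map rather than a binary hazard flag, but the division of labor is the same: humans choose the goal cell, and the machine works out how to reach it.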

The December 2025 AI-planned drives represent the threshold of a third stage: strategic autonomy, where the machine makes not just tactical decisions about obstacles but strategic decisions about destinations. The AI didn't just avoid rocks; it decided which general path across kilometers of Martian terrain offered the best combination of safety and scientific value. This is the kind of judgment that, until now, was considered exclusively human.

Tyler Del Sesto, who has worked on Perseverance's autonomous driving software for seven years, noted that the AI route planning leveraged vision-language models to analyze the same imagery human planners use, but processed it faster and considered more variables simultaneously. The AI doesn't get tired, doesn't have off days, and can hold the entire surface dataset in working memory while evaluating options. These are computational advantages that complement, rather than replace, human judgment.

The Speed of Light Problem

The fundamental driver of rover autonomy isn't technological ambition. It's physics. The speed of light imposes a hard constraint on how quickly information can travel between Earth and any robot operating elsewhere in the solar system.

For Mars, the one-way communication delay ranges from about 3 minutes (when Mars and Earth are at their closest) to approximately 22 minutes (when they're on opposite sides of the Sun). A round-trip exchange, sending a command and receiving confirmation, takes roughly 6 to 44 minutes. During solar conjunction, when Mars passes behind the Sun as viewed from Earth, reliable communication is impossible for about two weeks.

These delays mean that real-time control of a Mars rover, the kind of joystick-and-monitor operation familiar from drone piloting, is physically impossible. Even at minimum delay, a human trying to steer a rover in real time would be reacting to imagery that was already minutes old, making decisions about terrain the rover passed minutes ago, and any correction would take just as long to arrive. At maximum delay, the situation is absurd.
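The arithmetic behind these delays is simply distance divided by the speed of light. The sketch below shows the calculation. The two Earth-Mars distances are rounded, assumed values chosen for illustration, not mission data, and the function names are hypothetical.

```python
# Speed of light in km/s (exact by definition of the metre).
C_KM_PER_S = 299_792.458


def one_way_delay_minutes(distance_km: float) -> float:
    """Return the light travel time over distance_km, in minutes."""
    return distance_km / C_KM_PER_S / 60.0


def round_trip_delay_minutes(distance_km: float) -> float:
    """Return the time for a command to go out and a confirmation to come back."""
    return 2.0 * one_way_delay_minutes(distance_km)


# Assumed, rounded Earth-Mars distances in km (illustrative only).
MARS_DISTANCES_KM = {
    "near closest approach": 55e6,
    "near solar conjunction": 400e6,
}

if __name__ == "__main__":
    for label, distance_km in MARS_DISTANCES_KM.items():
        one_way = one_way_delay_minutes(distance_km)
        round_trip = round_trip_delay_minutes(distance_km)
        print(f"{label}: one-way {one_way:.1f} min, round trip {round_trip:.1f} min")
```

With these assumed distances the one-way delay comes out at roughly 3 and 22 minutes. The real figure on any given sol depends on where the two planets are in their orbits.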

Every increase in rover autonomy is, at its core, a response to this physical constraint. AutoNav exists because waiting 20 minutes to ask "should I drive around this rock?" wastes an unacceptable amount of a limited mission timeline. AI route planning exists because spending 6 hours having humans plan a route that an AI can plan in minutes wastes an even larger amount.

The constraint only gets worse as exploration pushes deeper into the solar system. A rover on Titan, Saturn's largest moon, would face one-way communication delays of roughly 67 to 92 minutes. On Europa, orbiting Jupiter, the delay is 33 to 54 minutes. For any mission to the outer solar system, the robot must be capable of operating independently for hours or days between communication windows. Strategic autonomy isn't optional for these missions. It's a prerequisite.

What Ingenuity Taught Us About Autonomous Machines

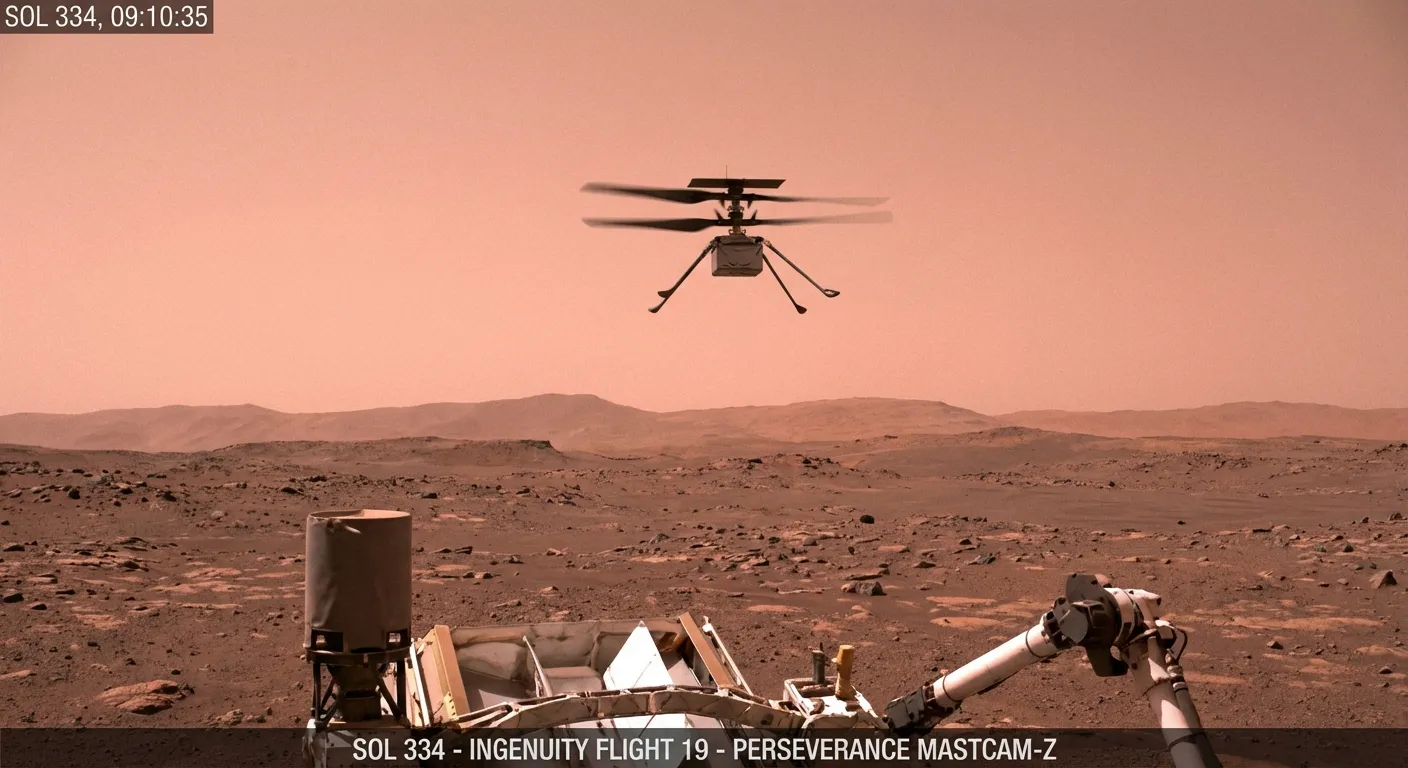

Before Perseverance demonstrated AI route planning, another machine on Mars proved that autonomous operation in alien environments was viable. The Ingenuity helicopter, a small rotorcraft that traveled to Mars attached to Perseverance's belly, flew 72 times between April 2021 and January 2024, covering a total distance of over 17 kilometers.

Every Ingenuity flight was autonomous. The communication delay made real-time piloting impossible, so each flight plan was uploaded in advance and executed independently. The helicopter's onboard computer processed camera imagery in real time, adjusting for wind, maintaining altitude, and navigating to designated waypoints. When conditions were too dangerous, such as excessive wind, the system made its own decision to abort.

Ingenuity was originally planned as a five-flight technology demonstration. It flew 72 times because NASA discovered that an autonomous machine, once proven reliable, could be used far more aggressively than originally planned. What started as a cautious experiment became a routine operational asset, scouting terrain ahead of Perseverance and photographing areas the rover couldn't easily reach.

The lesson was significant: autonomous systems on Mars don't just match human-directed operations. They can exceed them, because they aren't constrained by communication delays and can respond to conditions in real time. The same lesson applies, magnified, to autonomous driving.

The Next Steps Aren't on Mars

The capabilities being developed for Mars rovers are converging with another exploration challenge: subterranean navigation. Robots designed to explore lava tubes on the Moon and Mars face even more extreme autonomy requirements than surface rovers. Inside a lava tube, there is no GPS, no satellite communication, and no line-of-sight to any relay station. A robot in a tube must navigate, map, and make decisions about where to go in complete isolation.

The European multi-robot system recently described in Science Robotics, tested in lava caves on Lanzarote, addresses this challenge with a team of cooperating autonomous machines. Three robot types, a surface rover, a climbing robot, and a subterranean explorer, coordinate their actions to enter and map underground spaces without human input. The surface rover maintains a communication link to the outside world. The subterranean robots operate independently within the cave, sharing map data with each other through short-range communication.

This architecture, multiple autonomous agents coordinating without centralized human control, represents a further evolution beyond single-rover autonomy. Instead of one machine making decisions, multiple machines make decisions collaboratively, dividing tasks based on capability and sharing information to build a collective understanding of their environment.
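One way to picture robots "sharing map data" without a central controller is a pairwise map merge. The sketch below is an assumption for illustration, not the system described in Science Robotics: each hypothetical `Robot` keeps a dictionary from grid cells to a state, and when two robots come within range they fold each other's observations into their own map, with an obstacle report always winning over a free report.

```python
from dataclasses import dataclass, field

FREE, OBSTACLE = "free", "obstacle"


@dataclass
class Robot:
    """A hypothetical explorer that keeps its own partial map of the cave."""
    name: str
    local_map: dict = field(default_factory=dict)  # (x, y) -> FREE or OBSTACLE

    def observe(self, cell, state):
        """Record what this robot's own sensors saw at a cell."""
        self.local_map[cell] = state

    def merge_from(self, other):
        """Fold a neighbour's map into ours.

        Conservative rule (an assumption): once a cell is marked as an
        obstacle, a conflicting FREE report never overwrites it.
        """
        for cell, state in other.local_map.items():
            if self.local_map.get(cell) != OBSTACLE:
                self.local_map[cell] = state


if __name__ == "__main__":
    climber = Robot("climber")
    crawler = Robot("crawler")
    climber.observe((0, 0), FREE)
    climber.observe((1, 0), OBSTACLE)
    crawler.observe((1, 0), FREE)  # disagrees with the climber
    crawler.observe((2, 0), FREE)

    # A short-range exchange: each robot merges the other's map.
    climber.merge_from(crawler)
    crawler.merge_from(climber)
    print(climber.local_map == crawler.local_map)
```

Because the merge rule gives the same result regardless of the order in which reports arrive, both robots end up with the same map after one exchange, and no robot needs to act as the authority.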

The approach has precedent in biology. Social insects, from ants to bees, accomplish complex collective tasks through simple individual behaviors coordinated by local communication. No single ant plans the colony's foraging strategy. The strategy emerges from thousands of individual decisions, each informed by local conditions and signals from nearby individuals. Robotic exploration systems are beginning to follow a similar logic: distributed intelligence, local decision-making, emergent collective behavior.

Science Without Scientists Present

Perhaps the most profound shift in autonomous exploration is the move from "robots that go where we tell them" to "robots that decide what's scientifically interesting." Current rovers collect data that scientists on Earth analyze to determine the next steps. Future systems may integrate scientific analysis into the autonomous loop, allowing robots to identify features of interest, collect samples, and adjust their exploration strategy based on what they find, all without waiting for human input.

Early steps toward this capability are already operational. Perseverance runs a software system called AEGIS (Autonomous Exploration for Gathering Increased Science) that uses onboard image analysis to identify rocks matching criteria scientists have set in advance, such as unusual textures, and autonomously points instruments at them for closer examination. AEGIS doesn't wait for scientists to spot an interesting rock in downloaded imagery. It spots the rock itself and begins analysis immediately.
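The general pattern of onboard target selection can be sketched as "filter, score, pick the best." The example below is a loose illustration of that pattern and makes no claim about how AEGIS works internally. The `Candidate` fields, the weights, and the size cutoff are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A rock detected in an image, with made-up features for illustration."""
    name: str
    size_cm: float
    brightness: float  # 0.0 (dark) to 1.0 (bright)
    texture: float     # 0.0 (smooth) to 1.0 (rough)


def score(candidate, weights):
    """Weighted sum of the features the science team cares about."""
    return (weights["brightness"] * candidate.brightness
            + weights["texture"] * candidate.texture)


def pick_target(candidates, weights, min_size_cm):
    """Return the highest-scoring candidate large enough to observe, or None."""
    eligible = [c for c in candidates if c.size_cm >= min_size_cm]
    if not eligible:
        return None
    return max(eligible, key=lambda c: score(c, weights))


if __name__ == "__main__":
    # Hypothetical detections from a single navigation image.
    rocks = [
        Candidate("A", size_cm=12.0, brightness=0.3, texture=0.8),
        Candidate("B", size_cm=3.0, brightness=0.9, texture=0.9),  # too small
        Candidate("C", size_cm=20.0, brightness=0.6, texture=0.2),
    ]
    weights = {"brightness": 0.4, "texture": 0.6}  # uploaded by scientists
    target = pick_target(rocks, weights, min_size_cm=5.0)
    print(target.name if target else "no suitable target")
```

The point of the sketch is where the human input sits: scientists supply the weights and cutoffs ahead of time, and the choice of a specific rock happens on Mars without a round trip to Earth.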

Scaling this approach to entire mission campaigns is the logical next step. An autonomous rover equipped with advanced AI could land on an unexplored world, survey its surroundings, identify the most scientifically promising terrain, plan a route to reach it, collect and analyze samples, and transmit synthesized results to Earth. Instead of receiving raw data and deciding what to do next, human scientists would receive interpreted findings and decide what questions to pursue further.

This model doesn't eliminate human scientists. It changes their role from tactical directors to strategic partners. Rather than spending hours deciding where the rover should drive tomorrow, scientists would spend their time interpreting results, formulating hypotheses, and setting high-level objectives. The AI handles the daily operations. The humans handle the questions that require the kind of conceptual reasoning that AI has not yet mastered.

The Trust Gradient

The history of automated decision-making across domains shows a consistent pattern: humans initially resist ceding control to machines, then gradually relax as evidence accumulates that the machines are reliable. Aviation followed this arc: early autopilot systems were distrusted, then tolerated, then embraced, to the point that modern aircraft are flown by automation for the vast majority of each flight.

Space exploration is following the same arc, compressed into decades rather than the century aviation required. The next generation of explorers may operate for weeks or months between communication sessions with Earth, making all operational decisions independently. For missions to Europa or Titan, where a single round-trip exchange takes anywhere from about one hour to about three, this isn't a luxury. It is the only operational model that works.

The key variable isn't capability; it's consequences. NASA can accept higher autonomy risks for a Mars rover than for a crewed spacecraft because the consequences of failure are measured in lost science rather than lost lives. This asymmetry means robotic exploration will always be at the leading edge of the trust gradient, testing levels of machine autonomy that won't be applied to crewed missions for years or decades afterward.

The framework emerging at JPL, layering AI planning with human verification and onboard safety systems, provides a template for expanding that trust incrementally. Each successful demonstration builds evidence that enables the next step. Ten successful AI-planned drives justify attempting longer drives with less verification. A hundred successful drives might justify full strategic autonomy for specific terrain types. A thousand might justify it universally. The evidence accumulates one sol at a time, on a planet where patience is not optional.
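A simple statistical sketch shows why ten, a hundred, and a thousand successes support different levels of trust. It assumes every drive is an independent trial with the same failure probability p, which is a strong simplification and not a description of how JPL assesses risk. Under that assumption the chance of n straight successes is (1 - p)^n, so the largest p still consistent with a clean record at a given confidence level is 1 - (1 - confidence)^(1/n). The function name is hypothetical.

```python
def failure_rate_upper_bound(successes: int, confidence: float = 0.95) -> float:
    """Upper bound on per-drive failure probability after zero failures.

    Assumes independent drives with identical risk. Solves
    (1 - p) ** successes == 1 - confidence for p.
    """
    if successes <= 0:
        raise ValueError("need at least one successful drive")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    return 1.0 - (1.0 - confidence) ** (1.0 / successes)


if __name__ == "__main__":
    for n in (10, 100, 1000):
        bound = failure_rate_upper_bound(n)
        print(f"{n:>5} clean drives: failure rate below {bound:.2%} (95% confidence)")
```

Ten clean drives only rule out failure rates above roughly 26 percent, a hundred bring the bound to about 3 percent, and a thousand to about 0.3 percent. Each tenfold increase in evidence tightens the bound about tenfold, which is why trust can only expand in steps.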

The Deeper Question

When a machine explores another world without human direction, who is the explorer? The question isn't philosophical ornament. It has practical implications for how we value and fund space exploration.

Part of the appeal of space exploration has always been human aspiration: the idea that going to other worlds expresses something fundamental about who we are as a species. The Apollo missions were celebrated not just for their scientific returns but for the human achievement they represented. People went to the Moon. That mattered in a way that transcended science.

Autonomous machines complicate this narrative without invalidating it. No human has set foot on Mars. Everything we know about the Martian surface comes from robots. The scientific returns from Mars rovers have been extraordinary: evidence of ancient water, organic molecules in rocks, atmospheric measurements that inform our understanding of climate evolution. These discoveries belong to humanity even though no human was present to make them.

What changed in December 2025 is that the robot making discoveries on Mars also made the decision about where to look for them. The human role shifted from "director" to "reviewer." The scientific results are no less valuable for having been found by a machine that chose its own path. But the relationship between human intent and robotic execution has become more complex, more layered, and more interesting than the simple master-tool relationship that characterized early missions.

The partnership between human curiosity and machine capability is not a replacement for human exploration. It's the only form of exploration currently possible across most of the solar system. Every moon of Jupiter, every crater on Mercury, every valley on Titan will be visited first by a machine that must think for itself. And with each generation of increasingly autonomous robots, the line between tool and collaborator grows harder to draw.

The machines that explore for us are becoming better explorers. Whether that makes them more like tools or more like partners is a question that each new mission helps answer. For now, the answer is both. And the frontier keeps moving.

Sources

- NASA JPL: Perseverance Rover Completes First AI-Planned Drive on Mars

- Science Robotics: Autonomous robotics is driving Perseverance rover's progress on Mars

- Science Robotics: Cooperative robotic exploration of a planetary skylight surface and lava cave

- NASA JPL: Autonomous Systems Help Perseverance Do More Science on Mars