Unless you follow AI news closely, DeepSeek might have appeared on your radar seemingly overnight. The Chinese AI company has been making waves by producing large language models that compete with ChatGPT and Claude, but at a dramatically lower cost and with an open-source approach that's shaking up the industry. With DeepSeek V4 rolling out this month, it's worth understanding what this company actually does and why it matters.

The quick answer: DeepSeek is a Chinese artificial intelligence company that builds large language models (the technology behind chatbots like ChatGPT). What makes it notable is that DeepSeek claims to train its models for a fraction of what American competitors spend, releases its model weights publicly so anyone can use them, and has produced AI systems that perform comparably to models from OpenAI and Anthropic on many benchmarks.

How DeepSeek Got Here

DeepSeek was founded in July 2023 by Liang Wenfeng, who also runs High-Flyer, a Chinese quantitative hedge fund. The company is headquartered in Hangzhou, China, and unlike most AI startups, it didn't need to raise venture capital. High-Flyer's profits funded the operation, giving DeepSeek an unusual degree of independence from the investor-driven timelines that shape many Silicon Valley AI companies.

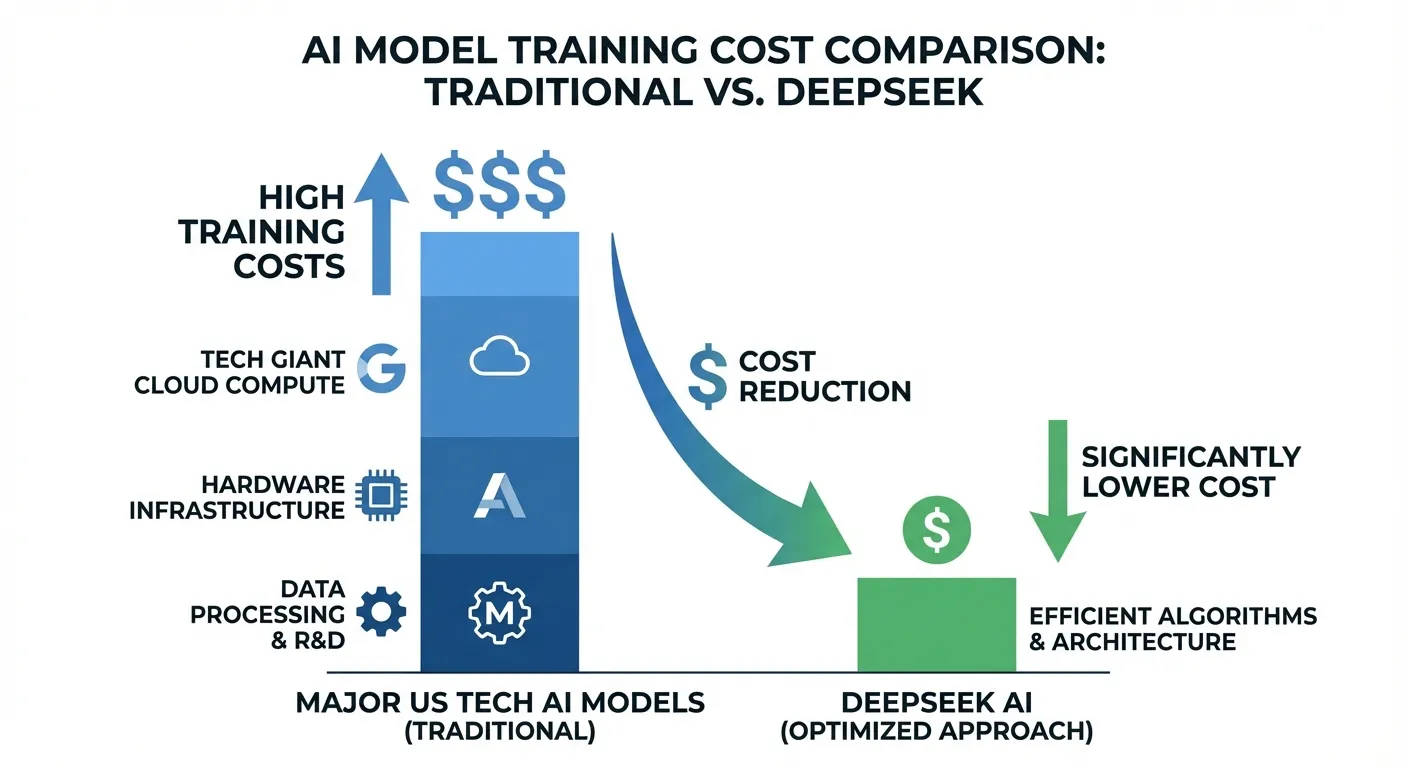

The company first grabbed global attention in January 2025 when it released DeepSeek-R1, a reasoning model that performed comparably to OpenAI's o1 on multiple benchmarks. What stunned the AI community wasn't just the performance but the price tag. DeepSeek reported that the final training run of DeepSeek-V3, the base model R1 was built on, cost roughly $6 million in GPU time, while estimates for training OpenAI's GPT-4 in 2023 exceeded $100 million. Even accounting for differences in how costs were calculated (DeepSeek's figure excludes earlier research and experiments), the gap was striking.

That cost efficiency wasn't accidental. DeepSeek developed its models under real constraints. U.S. trade restrictions on advanced AI chip exports to China meant the company couldn't access NVIDIA's most powerful hardware. Instead, DeepSeek's engineers found ways to achieve competitive results using less powerful chips and fewer of them, developing novel training techniques that the rest of the industry is now studying closely.

The Key Models: R1, V3, and V4

DeepSeek maintains several AI models, each designed for different tasks:

DeepSeek-V3 is the general-purpose conversational model, comparable to ChatGPT or Claude for everyday tasks like writing, answering questions, analysis, and coding. It uses a Mixture-of-Experts (MoE) architecture: the model is split into many specialized sub-networks ("experts"), and a small router sends each token to only a few of them. V3 has 671 billion parameters in total, but only about 37 billion are active for any given token, which makes it much cheaper to run than a dense model of the same size that uses all of its parameters for every query.
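
The routing idea can be sketched in a few lines of Python. This is a generic softmax top-k router written for illustration, not DeepSeek's code; DeepSeek's production router differs in its details (for example, DeepSeek's MoE design also keeps some always-active shared experts). The names `moe_layer`, `router`, and the toy experts are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of router logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def moe_layer(
    x: Vector,
    router: List[Vector],
    experts: List[Expert],
    k: int = 2,
) -> Tuple[Vector, List[int]]:
    """Route one token vector x to its top-k experts and mix their outputs.

    router holds one weight row per expert; the router logit for expert i
    is the dot product of router[i] with x.
    """
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router]
    probs = softmax(logits)
    # Keep only the k highest-scoring experts; the others are never called.
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    out = [0.0] * len(x)
    for i in chosen:
        y = experts[i](x)          # only k expert computations happen
        gate = probs[i] / norm     # renormalize gates over the chosen experts
        out = [o + gate * yj for o, yj in zip(out, y)]
    return out, chosen


# Usage: four toy "experts" that just scale their input; every call is logged.
calls: List[int] = []


def make_expert(idx: int, scale: float) -> Expert:
    def expert(v: Vector) -> Vector:
        calls.append(idx)
        return [scale * a for a in v]
    return expert


experts = [make_expert(i, s) for i, s in enumerate([2.0, 3.0, 5.0, 7.0])]
router = [[4.0, 0.0], [3.0, 0.0], [0.0, 0.0], [-1.0, 0.0]]
output, chosen = moe_layer([1.0, 0.0], router, experts, k=2)
print(chosen, sorted(calls))
```

Only the two selected experts run, even though four exist; in a real MoE model the same effect means most parameters sit idle for any single token.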

DeepSeek-R1 is the reasoning specialist. Like OpenAI's o1 or o3, it's designed to "think through" complex problems step by step before answering. R1 excels at math, logic puzzles, and coding challenges where the model benefits from extended reasoning chains. It was released under the MIT License, meaning anyone can use, modify, and build upon it freely.
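
When you run the open-weight R1 checkpoints yourself, the raw output typically contains the reasoning chain wrapped in `<think>...</think>` tags, followed by the final answer. The helper below separates the two; the tag convention is an assumption to check against the checkpoint and chat template you use, and `split_reasoning` is a hypothetical name.

```python
from typing import Tuple


def split_reasoning(raw: str) -> Tuple[str, str]:
    """Split a raw R1-style completion into (reasoning, final_answer).

    Assumes the reasoning is closed by a </think> tag. Some chat templates put
    the opening <think> in the prompt, so it may be missing from the output;
    both cases are handled. Without a closing tag, everything is the answer.
    """
    close_tag = "</think>"
    if close_tag not in raw:
        return "", raw.strip()
    reasoning, answer = raw.split(close_tag, 1)
    reasoning = reasoning.replace("<think>", "", 1)
    return reasoning.strip(), answer.strip()


raw_output = "<think>\n17 is odd and has no divisors other than 1 and itself.\n</think>\n17 is prime."
reasoning, answer = split_reasoning(raw_output)
print(answer)
```

Keeping the reasoning separate lets an application log or hide the "thinking" while showing users only the final answer.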

DeepSeek V4, currently rolling out in mid-February 2026, represents the company's biggest technical leap yet. It introduces three headline features that set it apart from previous models. The first is DeepSeek Sparse Attention (DSA), which reportedly cuts the computational cost of attention by roughly 50% compared to standard attention mechanisms. The second is Engram Conditional Memory, a system that lets the model selectively retain information based on task context, improving how it handles large codebases. The third is a context window exceeding one million tokens, meaning V4 can process an entire large codebase in a single pass.
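
Sparse attention in general saves work by letting each token attend to a small, selected subset of earlier tokens instead of all of them. The sketch below shows one generic form of that idea: a cheap scoring pass picks the top-k keys, then ordinary softmax attention runs only over those. It is an illustration under stated assumptions, not DeepSeek's DSA implementation; the function name and the "first `index_dims` dimensions" scoring proxy are hypothetical stand-ins for a learned selection mechanism.

```python
import math
from typing import List, Sequence, Tuple

Vector = List[float]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def topk_sparse_attention(
    q: Vector,
    keys: List[Vector],
    values: List[Vector],
    k: int,
    index_dims: int,
) -> Tuple[Vector, List[int]]:
    """Attend from one query to only the k most relevant keys.

    Step 1 (cheap): score every key with a low-cost proxy, here a dot product
    over just the first `index_dims` dimensions (hypothetical indexer).
    Step 2 (expensive): ordinary scaled dot-product attention over the k
    selected keys only.
    """
    proxy = [dot(q[:index_dims], key[:index_dims]) for key in keys]
    selected = sorted(range(len(keys)), key=lambda i: proxy[i], reverse=True)[:k]

    scale = 1.0 / math.sqrt(len(q))
    scores = [dot(q, keys[i]) * scale for i in selected]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)

    out = [0.0] * len(values[0])
    for w, i in zip(weights, selected):
        out = [o + (w / total) * v for o, v in zip(out, values[i])]
    return out, sorted(selected)


q = [1.0, 0.5]
keys = [[1.0, 0.0], [0.0, 1.0], [2.0, 0.0], [-1.0, 0.0]]
values = [[1.0], [2.0], [3.0], [4.0]]
out, used = topk_sparse_attention(q, keys, values, k=2, index_dims=1)
print(used, out)
```

With n tokens in context, the expensive attention step touches k entries per query instead of n. The cheap pass still visits every key, which is why the scoring proxy has to cost far less than full attention for the scheme to pay off; with `k` equal to the number of keys, the function reduces to ordinary dense attention.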

V4 appears heavily optimized for coding tasks. Early reports suggest it can diagnose bugs spanning multiple files, trace execution paths across an entire repository, and propose fixes that account for dependencies between components. DeepSeek claims a 90% score on the HumanEval coding benchmark, though independent verification is still pending.
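
HumanEval consists of 164 hand-written Python programming problems, each checked by unit tests, and scores are usually reported as pass@k: the probability that at least one of k generated solutions passes. The sketch below uses the unbiased pass@k estimator from the original HumanEval paper. The run it scores is hypothetical, chosen only to show what a score near 90% means, and is not DeepSeek's data.

```python
from math import comb
from typing import List, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n samples generated, c of them pass."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_score(results: List[Tuple[int, int]], k: int = 1) -> float:
    """Average pass@k over all problems; results holds (n, c) per problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Hypothetical run: one sample per problem on all 164 problems,
# with 148 of them passing their unit tests.
results = [(1, 1)] * 148 + [(1, 0)] * 16
print(f"pass@1 = {benchmark_score(results):.1%}")
```

In other words, a 90% pass@1 score means the model's first attempt passes the tests on roughly 148 of the 164 problems.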

How DeepSeek Compares to ChatGPT and Claude

The comparison depends heavily on what you're using AI for. On standardized benchmarks, DeepSeek's models trade leads with ChatGPT and Claude across different tasks. R1 performs comparably to OpenAI's o1 on mathematical reasoning. V3 holds its own against GPT-4o on general conversation and analysis. V4's coding capabilities may surpass current competitors, though those claims remain unverified.

Where DeepSeek differs most is in accessibility and cost. Using DeepSeek's models through their API costs significantly less than equivalent OpenAI or Anthropic API calls. For developers building applications, that cost difference is meaningful. DeepSeek's open-weight approach also means you can download the models and run them on your own hardware, something you can't do with ChatGPT or Claude.
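
DeepSeek documents its hosted API as compatible with the OpenAI chat-completions format, and common self-hosting servers such as vLLM expose the same format. Switching between the hosted API and a copy running on your own hardware can therefore be as small as changing the base URL. The sketch below only builds the HTTP request with Python's standard library and does not send it; the local URL, port, and local model name are assumptions for illustration, and model names on the hosted API may change, so check DeepSeek's API documentation.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions POST request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Hosted: the prompt would be sent to DeepSeek's servers.
hosted = build_chat_request(
    "https://api.deepseek.com", "YOUR_API_KEY", "deepseek-chat", "Summarize this function."
)
# Self-hosted (hypothetical): an OpenAI-compatible server on your own machine,
# e.g. one started with vLLM; the prompt never leaves localhost.
local = build_chat_request(
    "http://localhost:8000/v1", "not-needed", "my-local-deepseek", "Summarize this function."
)

for req in (hosted, local):
    print(req.get_method(), req.full_url)
# urllib.request.urlopen(hosted) would actually send the request.
```

Because the payload is identical in both cases, the choice between paying per API call and running the model locally becomes a deployment decision rather than a code rewrite.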

According to early reports, V4's hardware requirements are surprisingly modest by AI standards: two NVIDIA RTX 4090 GPUs or a single RTX 5090 can run the model locally. That's expensive for an average consumer but accessible for businesses and developers who want to avoid ongoing API costs or keep their data entirely in-house.
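
Whether a model fits on a given GPU setup is mostly arithmetic: the weights alone take roughly (parameter count) × (bits per weight) / 8 bytes, which is why local deployments usually rely on quantized weights (for example 4-bit instead of 16-bit). The sketch below applies that rule of thumb. The model sizes are hypothetical and are not V4's parameter count, the 20% overhead margin is an assumption, and treating two RTX 4090s as 48 GB assumes the model is split across both cards.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gb: float, overhead: float = 1.2) -> bool:
    """Rough check: weights plus an assumed 20% margin for activations and cache."""
    return weight_memory_gb(params_billion, bits_per_weight) * overhead <= vram_gb


# GPU memory: RTX 4090 = 24 GB, RTX 5090 = 32 GB.
setups = {"1x RTX 4090": 24.0, "1x RTX 5090": 32.0, "2x RTX 4090": 48.0}
for params in (8, 32, 70):  # hypothetical model sizes, not V4's
    for bits in (16, 4):
        need = weight_memory_gb(params, bits)
        ok = [name for name, vram in setups.items() if fits_in_vram(params, bits, vram)]
        print(f"{params}B @ {bits}-bit: ~{need:.0f} GB of weights, fits: {ok or 'none'}")
```

The fixed margin is optimistic for long inputs: the cache that attention keeps for every token in context grows with context length, so filling a very large context window needs far more memory than the weights alone suggest.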

On the other hand, ChatGPT and Claude have advantages in user experience, safety features, and integration with other tools. OpenAI's ecosystem includes DALL-E for image generation, advanced voice mode, and deep integration with Microsoft products. Anthropic's Claude offers strong performance in lengthy document analysis and has built a reputation for careful, nuanced responses on sensitive topics.

The Privacy and Security Question

DeepSeek's Chinese origins raise legitimate questions about data privacy that are worth taking seriously. Data entered into DeepSeek's online chatbot is processed on servers in China, subject to Chinese data privacy laws, and potentially accessible to Chinese authorities under the country's national security framework. In early 2025, several countries and organizations began restricting DeepSeek's use on government devices, and some companies have added it to their list of unauthorized AI tools in the workplace.

That said, context matters. If you're using DeepSeek's open-weight models downloaded to your own hardware, your data never leaves your machine. This is one of the key advantages of the open-weight approach: you get the model's capabilities without sending your queries to anyone's servers. For developers and businesses with sensitive data, local deployment eliminates the data sovereignty concern entirely.

For casual use, the privacy calculus is similar to any cloud-based AI service. Don't enter passwords, financial details, proprietary business information, or anything you wouldn't want a third party to see. This advice applies equally to ChatGPT, Claude, or any other AI chatbot, but DeepSeek's Chinese jurisdiction adds another consideration for users in countries with tense political relationships with China.

Security researchers have also flagged concerns about DeepSeek's content moderation. The model is subject to Chinese content regulations, which means it may refuse to discuss certain political topics or provide different answers on subjects sensitive to the Chinese government, such as Taiwan, Tiananmen Square, or Xinjiang. On most everyday questions, this won't affect your experience, but it's worth knowing the limitation exists.

Should You Actually Use DeepSeek?

The answer depends entirely on your use case and risk tolerance.

DeepSeek makes the most sense if you're: a developer looking for a powerful, low-cost AI model for coding tasks; a business that wants to run AI locally without sending data to external servers; a researcher or tinkerer who wants to experiment with state-of-the-art models on consumer hardware; or a budget-conscious user who wants ChatGPT-level performance without a subscription fee.

You might want to stick with ChatGPT or Claude if you're: handling sensitive personal or business data and prefer U.S. or European data jurisdiction; looking for the most polished consumer experience with mobile apps and integrations; working in a regulated industry (healthcare, finance, government) where data handling requirements are strict; or needing consistent, unfiltered responses on geopolitically sensitive topics.

For most people asking "should I try DeepSeek?", the honest answer is: it's worth experimenting with, especially if you already use ChatGPT and want to compare results. The free chatbot at chat.deepseek.com gives you a no-risk way to test it. Just be mindful of what information you enter, the same way you should be with any AI chatbot.

What DeepSeek Means for the AI Industry

DeepSeek's impact extends beyond any single product. The company proved that you don't necessarily need billions of dollars and the latest hardware to build competitive AI models. That finding has implications for the entire industry.

For consumers, more competition generally means better products and lower prices. OpenAI dropped prices and accelerated feature releases partly in response to DeepSeek's emergence. Anthropic and Google have similarly adjusted their strategies. The days when a handful of companies could charge premium prices for AI access are likely numbered, and DeepSeek's open-weight approach ensures that even if the company disappeared tomorrow, the models and techniques would remain available.

For governments and policymakers, DeepSeek illustrates that export restrictions on AI chips didn't prevent China from developing competitive AI. Instead, the constraints may have forced Chinese researchers to develop more efficient approaches that now benefit the broader field. The geopolitical implications are complex and evolving, but the technical reality is that the AI capability gap between the U.S. and China is smaller than many expected.

Geoff Hinton, the Nobel Prize-winning AI researcher often called the "godfather of AI," described DeepSeek's efficiency advances as "a wake-up call" for Western AI labs that had been relying heavily on scaling up hardware rather than innovating on training methods. Whether you view DeepSeek as a competitor, a collaborator, or a concern, it's clearly one of the most significant developments in AI over the past two years.

The Short Answer

DeepSeek is a Chinese AI company that builds large language models rivaling ChatGPT and Claude at substantially lower cost. Its models are open-weight, meaning you can download and run them locally. The latest release, DeepSeek V4, is billed as particularly strong at coding tasks and can process over a million tokens of context. The tradeoff: data entered into DeepSeek's online chatbot is processed in China, and the model is subject to Chinese content regulations. For privacy-conscious users, the open-weight option of running models locally eliminates the data sovereignty concern. For everyone else, it's worth trying the free chatbot to see how it compares to whatever AI tool you currently use.

Sources

- DeepSeek, Wikipedia

- DeepSeek V4: Everything We Know About the Upcoming Coding AI Model, WaveSpeedAI

- DeepSeek R4 AI Model 2026: The $6M Threat Returns, RedHub.ai

- DeepSeek Kicks Off 2026 with Paper Signalling Push to Train Bigger Models for Less, South China Morning Post

- GLM-5, SeedDance 2.0, and DeepSeek V4: Capabilities, Specs, and Why They Matter in 2026, NerdBot