For more than sixty years, the Milgram experiment has been shorthand for a terrifying idea: ordinary people will hurt strangers simply because an authority figure tells them to. It is one of the most cited studies in the history of social science, a staple of every introductory psychology course, and the go-to reference whenever someone needs to explain how atrocities happen. The conclusion felt settled. People obey.

But in March 2026, researchers David Kaposi and David Sumeghy published a study in the journal *Political Psychology* that may unravel the entire narrative. They did something no one had done systematically before: they listened to 136 of Stanley Milgram's original audio recordings, preserved for decades at Yale University Library. What they found was not obedience. Not a single participant who completed all the shocks actually followed the rules of the experiment. The real story, it turns out, is not about compliance. It is about entrapment.

The Experiment Everyone Thinks They Know

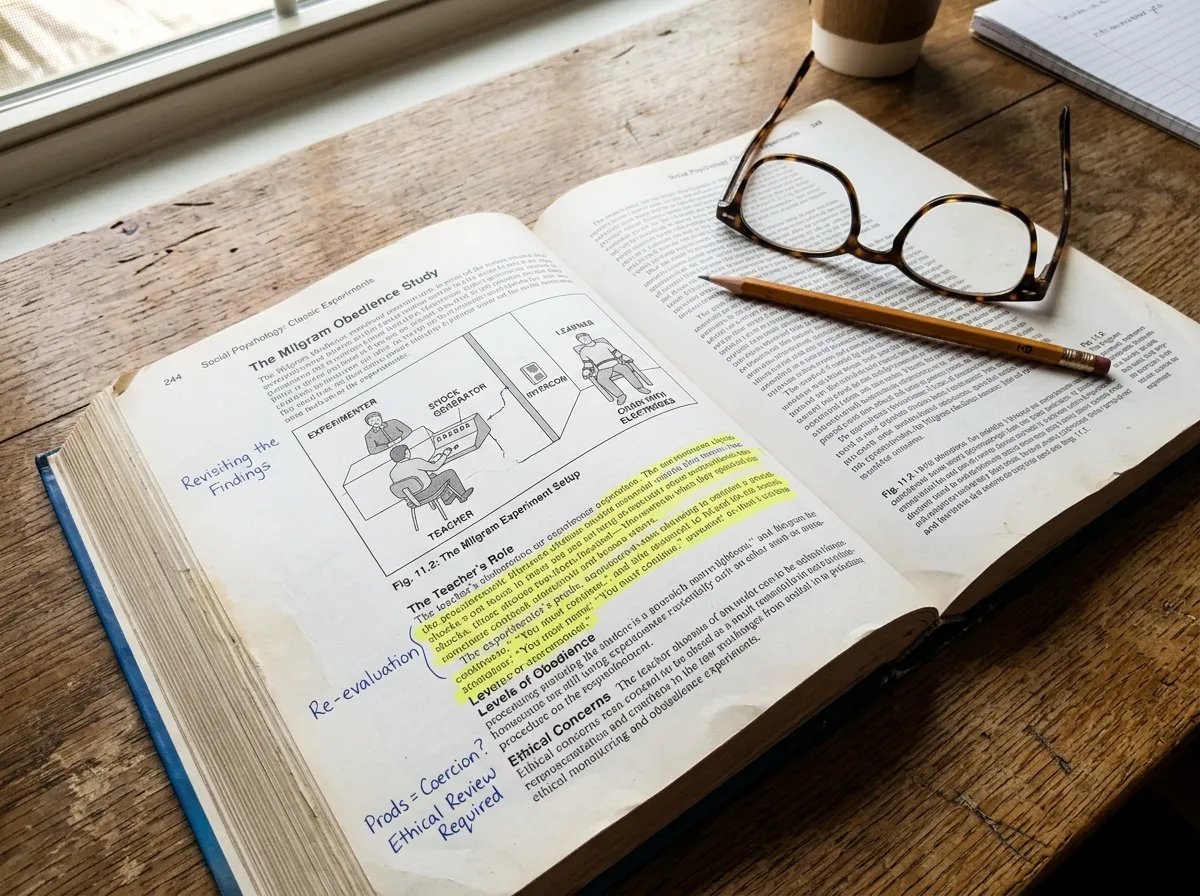

In 1961, Yale psychologist Stanley Milgram recruited volunteers for what he described as a study on learning and memory. Each participant played the role of "teacher," paired with a "learner" (actually an actor) in an adjacent room. When the learner gave wrong answers to word-pair questions, the teacher was instructed to deliver electric shocks of increasing voltage, from 15 volts up to a maximum of 450. The shocks were fake, and so were the screams: they were prerecorded and played through the wall with escalating intensity. To the teacher, both seemed real.

The results stunned the scientific community. In the baseline condition, 65% of participants went all the way to the maximum shock. Milgram concluded that ordinary people, placed under the authority of a legitimate institution, would commit acts of serious harm. The study became a lens through which the world understood the Holocaust, Abu Ghraib, and every instance of institutional violence where someone "was just following orders."

The interpretation has been challenged before: on ethical grounds, on methodological grounds, and on the question of whether participants truly believed the shocks were real. But those challenges nibbled at the edges. The core claim, that most people obeyed, survived because nobody had gone back to check what actually happened in the room.

What the Tapes Reveal

Kaposi, a psychologist at The Open University in the UK, and Sumeghy spent years cataloging and analyzing the surviving recordings. They focused on four experimental conditions that closely matched Milgram's baseline setup, the version most commonly cited in textbooks. Their method was straightforward: they listened to every session and tracked whether participants followed the experiment's five-step protocol.

The protocol required teachers to complete five steps in every trial: read a test question to the learner, evaluate the learner's answer, announce the voltage level, press the shock lever, and read the correct answer aloud. This sequence was not optional. It was the scientific procedure that gave the experiment its legitimacy as a study of learning and memory.

Here is what the tapes showed: not a single fully obedient participant, not one of the people who went all the way to 450 volts, completed those five steps correctly from start to finish. Across all obedient participants, 48.4% of their shock sequences contained at least one procedural violation. They skipped steps, performed them out of order, or executed them incorrectly. Among disobedient participants (those who eventually refused to continue), the violation rate during their compliant phases was still 30.6%.
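To make the arithmetic behind a figure like 48.4% concrete, here is a minimal sketch of how a per-sequence violation rate could be computed once each shock sequence has been coded as a list of steps. The step names, the `has_violation` and `violation_rate` helpers, and the example data are all hypothetical illustrations, not the researchers' actual coding scheme. The sketch also only catches skipped, added, or reordered steps; a step performed incorrectly (such as reading a question over the screams) would need an extra flag.

```python
from typing import List, Sequence

# The five protocol steps, in the order the article lists them.
# The labels themselves are hypothetical names chosen for this sketch.
PROTOCOL = (
    "read_question",
    "evaluate_answer",
    "announce_voltage",
    "press_lever",
    "read_correct_answer",
)


def has_violation(observed: Sequence[str]) -> bool:
    """Return True if the sequence skips, adds, or reorders any step."""
    return tuple(observed) != PROTOCOL


def violation_rate(sequences: List[Sequence[str]]) -> float:
    """Share of sequences that contain at least one violation."""
    if not sequences:
        raise ValueError("no sequences to score")
    return sum(has_violation(s) for s in sequences) / len(sequences)


# Made-up example data, NOT taken from the Milgram tapes.
example = [
    list(PROTOCOL),  # fully compliant
    # voltage announcement skipped
    ["read_question", "evaluate_answer", "press_lever", "read_correct_answer"],
    # voltage announced before the answer was evaluated
    ["read_question", "announce_voltage", "evaluate_answer",
     "press_lever", "read_correct_answer"],
    list(PROTOCOL),  # fully compliant
]

print(f"{violation_rate(example):.1%}")  # prints 50.0%
```

Note that the rate is counted per shock sequence, not per participant, which is why a participant can have a violation rate below 100% and still never complete the protocol cleanly from start to finish.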

The most common violation was striking. Participants read the next test question directly over the learner's simulated screams, making it impossible for the learner to hear or answer correctly. This guaranteed the learner would get the next question wrong, which guaranteed another shock. The teachers were not following a procedure. They were trapped in a collapsing loop.

The Silence That Changed Everything

The critical finding is not that participants broke rules. It is what happened when they did: nothing.

Milgram's experimenter, the authority figure in the lab coat, was supposed to enforce the protocol. That was the entire point of the setup. The experiment's legitimacy rested on the idea that participants were following a structured scientific procedure under the direction of a credible institution. But when teachers started skipping steps, reading over screams, or mangling the protocol in ways that made the "learning study" meaningless, the experimenter said nothing.

This silence, Kaposi and Sumeghy argue, is the key to understanding what actually happened. The experimenter's failure to correct violations meant the experiment's stated purpose, studying how punishment affects learning, had quietly dissolved. What remained was raw pressure to continue shocking, stripped of any scientific justification. The participants who kept going were not obeying a legitimate authority. They were stuck in a situation that had lost its rationale but retained its coercive force.

"The experiment requires that you continue," the experimenter would say when participants hesitated. But the experiment, as a coherent scientific protocol, had already fallen apart. What Milgram may have captured, the researchers suggest, was not obedience. It was something closer to entrapment in a situation that no longer made sense.

Who Resists, and Why

The tape analysis also complicates the picture of who continued and who refused. The traditional Milgram narrative suggests that resisters were somehow morally exceptional, rare individuals who stood up to authority while the majority caved. The new data suggests something different.

Participants who continued to the end tended to be agreeable and conscientious, the kind of people who follow rules in everyday life. They were cooperative rule-followers caught in a situation where cooperating had become destructive. Their very agreeableness, the trait that makes someone a good colleague, a reliable neighbor, a pleasant person to be around, was what kept them pressing the switch.

Those who stopped, by contrast, tended to ask a particular kind of question. Not "I don't want to do this" (though many said that), but "Does this still make sense?" They noticed the protocol had become incoherent, that the "learning study" was no longer studying learning, and they used that recognition as their exit. Taking the protocol's legitimacy seriously was not what enabled harm. It was what made stopping possible.

This reframes resistance not as exceptional moral courage but as a specific cognitive skill: the ability to recognize when a situation's justification has evaporated, even when the pressure to continue has not. It is a skill that connects to what psychologists call "situational awareness," the capacity to step back from the immediate demands of a scenario and evaluate whether the whole thing still holds together. Research on how daily habits bypass conscious thought suggests this kind of reflective pause is harder to trigger than we might assume.

Why It Took Sixty Years

A reasonable question: if the tapes were sitting at Yale this whole time, why did it take until 2026 for someone to listen to them systematically?

Part of the answer is practical. The recordings are analog, stored on deteriorating media, and analyzing 136 sessions requires hundreds of hours of careful listening and coding. But part of the answer is also about how powerful narratives sustain themselves. The "obedience to authority" conclusion was so clean, so useful, and so disturbing that it became self-reinforcing. Textbooks repeated it. Popular books amplified it. The study entered common knowledge as a settled fact, and settled facts attract less scrutiny than open questions.

Milgram himself contributed to this. His published accounts emphasized the obedience rate and the distress of participants, not the procedural chaos that the tapes reveal. He was telling a story about human nature, and the messy details of how the experiment actually unfolded did not fit that story. The recordings, which might have complicated the narrative from the beginning, sat in an archive while the simplified version circled the globe.

This pattern, where a study's conclusion becomes more famous than its data, is not unique to Milgram. The Stanford Prison Experiment, another pillar of social psychology's "people are capable of terrible things" canon, has undergone similar revisionism. The broader lesson may be that the most influential studies in psychology are the ones most vulnerable to mythologization, precisely because their conclusions are so compelling that nobody wants to check the details.

The Deeper Question

If Milgram did not capture obedience, what did he capture?

Kaposi and Sumeghy's answer is both less dramatic and more useful than the original. What the experiment showed, they argue, is how coercive environments operate when their stated purpose collapses. The participants were not blindly following orders. They were caught in a dynamic where the authority figure refused to acknowledge that the situation had broken down, and where the social pressure to continue existed independently of any rational justification for continuing.

This is, in some ways, a scarier conclusion than Milgram's. "People obey authority" is a problem with a clear solution: question authority. But "people get trapped in deteriorating situations because the pressure to continue persists after the reasons to continue have disappeared" is harder to address. It implicates not just authority but inertia, social awkwardness, the difficulty of stopping once you've started, and the very agreeableness that makes social life possible.

The practical implications extend far beyond psychology laboratories. Consider workplace cultures where employees continue following procedures that everyone privately acknowledges are broken, military operations that persist after their strategic rationale has evaporated, or bureaucratic systems that keep demanding compliance with rules that no longer serve their original purpose. In each case, the Milgram pattern, as Kaposi and Sumeghy describe it, applies: the structure's legitimacy has dissolved, but its coercive force remains.

Understanding this distinction matters because the interventions are different. If the problem is obedience, you teach people to question authority. If the problem is entrapment, you teach people to recognize when a situation's justification has collapsed, and you build systems that make it easier to stop. The first is a moral lesson. The second is a design problem. And design problems, unlike moral failings, can actually be solved.

Where This Leads

The Milgram experiment is not going to disappear from textbooks. It remains one of the most important studies in the history of social science, not because its original interpretation was correct, but because the data it generated continues to yield new insights sixty-five years later. What changes is the lesson.

The old lesson was: be afraid of how easily people obey. The new lesson, drawn from 136 audio tapes that sat in a Yale archive gathering dust, is both more nuanced and more actionable. People do not simply obey. They get stuck. They continue not because they are following orders, but because stopping feels harder than going on, especially when the person in charge refuses to acknowledge that the whole thing has gone sideways. The good news is that the people who do stop tend to share a recognizable trait: they ask whether the situation still makes sense. That is not moral heroism. It is a question anyone can learn to ask.

Sources

- PsyPost: Audio tapes reveal mass rule-breaking in Milgram's obedience experiments

- Psychology Today: Why Ordinary People Do Terrible Things

- David Kaposi & David Sumeghy, "Audio tapes challenge Milgram obedience findings," *Political Psychology* (2026), DOI: 10.1111/pops.70112

- Boing Boing: Audio tapes challenge Milgram obedience findings